Hindsight: The Open-Source Memory System That Lets AI Agents Actually Learn (91.4% on LongMemEval)

Published Mar 18, 2026. Updated Mar 19, 2026.

Why Memory Is the Weakest Link in AI Agents

AI agents can reason, call tools, and orchestrate complex workflows. But ask them to remember a conversation from last week, and most start from scratch. This chronic amnesia is the Achilles' heel of agentic AI in 2026.

The problem is not the language models themselves. It is how memory has been treated in agent architectures. The dominant solution, RAG (Retrieval-Augmented Generation), was designed for one-off questions about static documents. The principle is straightforward: split documents into chunks, convert them into vectors, store them in a vector database, and retrieve the semantically closest passages when a query arrives.

This works for isolated questions. It falls apart when agents need to operate over long sessions, retain context over time, or distinguish what they have observed from what they believe. RAG treats all retrieved information uniformly: a fact learned six months ago carries the same weight as a newly formed opinion. Contradictory information coexists without reconciliation. The system cannot represent uncertainty, track how beliefs evolve, or understand why a particular conclusion was reached.

This is the context in which Vectorize launched Hindsight in December 2025: an open-source memory system designed to work like human memory. Its headline result is 91.4% accuracy on the LongMemEval benchmark, reported as the highest score recorded by any system.

Hindsight's Biomimetic Architecture

Hindsight's philosophy rests on a simple but powerful idea: an AI agent's memory should work like human memory, not like a search engine. Where most memory systems merely store and retrieve text fragments, Hindsight organizes information into four distinct memory types, each playing a different role in the agent's reasoning.

The Four Memory Types

| Type | What it stores | Example |
|---|---|---|
| World (Facts) | Objective facts about the world | "Alice works at Google as a software engineer" |
| Experiences | Agent's own actions and interactions | "I recommended Python to Bob for his project" |
| Opinions | Beliefs with confidence scores | "I shouldn't touch the stove again" (confidence: 0.99) |
| Observations | Complex mental models derived from reflection | "Curling irons, ovens, and fire are also hot. I shouldn't touch those either." |

This separation is fundamental. It creates what researchers call "epistemic clarity": the clean distinction between what the agent knows (facts), what it has experienced (experiences), what it believes (opinions), and what it has inferred (observations). When an agent forms an opinion, the belief is stored separately from the supporting facts, accompanied by a confidence score. As new data arrives, the system can strengthen or weaken existing beliefs rather than treating all stored information with equal certainty.

Each stored fact is assigned to exactly one of these four memory types (called networks) and attached to a shared memory graph. This graph connects memory units through four link types: entity (same canonical entity), temporal (close in time, weighted with exponential decay), semantic (high embedding similarity), and causal (cause-effect relationships).
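To make the structure concrete, here is a minimal sketch of a memory unit tagged with one network, plus a temporal link weight that decays exponentially with the time gap. The class names, the `tau_hours` time constant, and the rule that opinions must carry a confidence are illustrative assumptions, not Hindsight's actual schema.

```python
import math
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class Network(Enum):
    WORLD = "world"
    EXPERIENCE = "experience"
    OPINION = "opinion"
    OBSERVATION = "observation"


class LinkType(Enum):
    ENTITY = "entity"
    TEMPORAL = "temporal"
    SEMANTIC = "semantic"
    CAUSAL = "causal"


@dataclass
class MemoryUnit:
    text: str
    network: Network                     # exactly one network per unit
    confidence: Optional[float] = None   # assumed: only opinions carry one

    def __post_init__(self) -> None:
        if self.network is Network.OPINION and self.confidence is None:
            raise ValueError("an opinion needs a confidence score")


def temporal_link_weight(a: datetime, b: datetime, tau_hours: float = 24.0) -> float:
    """Weight of a temporal link: 1.0 for simultaneous events, decaying
    exponentially as the gap grows. tau_hours is a hypothetical constant."""
    gap_hours = abs((a - b).total_seconds()) / 3600.0
    return math.exp(-gap_hours / tau_hours)


stove = MemoryUnit("I shouldn't touch the stove again", Network.OPINION, confidence=0.99)
```

With these assumed defaults, two events one day apart get a link weight of about 0.37, and events a week apart are effectively unlinked in time.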

Entity Resolution and Temporal Awareness

Hindsight does not just store raw text. The system extracts structured facts from conversations, resolves entities (so "Alice," "Alice Chen," and "the new PM" all map to the same person), and builds a knowledge graph that captures relationships between entities, events, and concepts.
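The idea of entity resolution can be illustrated with a toy alias table. The article does not describe how Hindsight resolves mentions, and a real resolver has to use context rather than a fixed lookup; the identifier `person:alice-chen` and the table below are hypothetical.

```python
from typing import Optional

# Hypothetical alias table: every surface form points at one canonical entity.
ALIASES = {
    "alice": "person:alice-chen",
    "alice chen": "person:alice-chen",
    "the new pm": "person:alice-chen",
}


def resolve(mention: str) -> Optional[str]:
    """Return the canonical entity id for a mention, or None if unknown."""
    return ALIASES.get(mention.strip().lower())


mentions = ["Alice", "Alice Chen", "the new PM"]
canonical = {resolve(m) for m in mentions}  # a single canonical id
```

Because all three mentions collapse to one identifier, facts extracted from different conversations attach to the same node in the graph.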

Every fact stores two distinct timestamps: when the event occurred (occurrence time) and when the agent learned about it (mention time). A fact retained in January 2025 about Alice's wedding in June 2024 can answer both "What did Alice do in 2024?" and "What did I learn recently?" Queries like "last spring" or "before the merger" are automatically parsed into date ranges.
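The two-timestamp idea can be sketched as follows. The field names `occurred_on` and `mentioned_on`, and the reading of "last spring" as the most recent completed March to May window, are assumptions made for illustration rather than Hindsight's actual parsing rules.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Tuple


@dataclass(frozen=True)
class Fact:
    text: str
    occurred_on: date    # occurrence time: when the event happened
    mentioned_on: date   # mention time: when the agent learned about it


def occurred_between(facts: List[Fact], start: date, end: date) -> List[Fact]:
    """Answer 'what happened in this period?' using occurrence time."""
    return [f for f in facts if start <= f.occurred_on <= end]


def learned_since(facts: List[Fact], since: date) -> List[Fact]:
    """Answer 'what did I learn recently?' using mention time."""
    return [f for f in facts if f.mentioned_on >= since]


def last_spring(today: date) -> Tuple[date, date]:
    """Assumed convention: the most recent completed Mar 1 to May 31 window."""
    year = today.year if today > date(today.year, 5, 31) else today.year - 1
    return date(year, 3, 1), date(year, 5, 31)


wedding = Fact("Alice got married", date(2024, 6, 15), date(2025, 1, 10))
facts = [wedding]
```

The same stored fact is returned by a 2024 occurrence query and by a "learned since January 2025" query, which is the behavior the paragraph above describes.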

The Three Operations: Retain, Recall, Reflect

Hindsight is built around three fundamental operations that govern the complete lifecycle of memory.

Retain: Structured Information Storage

The retain operation converts raw interactions into structured, time-aware memory. Behind the scenes, the system uses an LLM to extract key facts, temporal data, entities, and relationships. These elements pass through a normalization process that transforms extracted data into canonical entities, time series, and search indexes.

The retention pipeline processes input data by extracting narrative facts, generating embeddings, resolving entities, and constructing four types of graph links: temporal, semantic, entity, and causal.

Recall: Precise Retrieval via TEMPR

The recall operation is the heart of the retrieval system. Unlike traditional RAG systems limited to vector search, Hindsight runs four search strategies in parallel through its TEMPR system (Temporal Entity Memory Priming Retrieval).

| Strategy | Best for |
|---|---|
| Semantic (vector) | Conceptual similarity, paraphrasing |
| Keyword (BM25) | Proper names, technical terms, exact matches |
| Graph | Related entities, indirect connections, multi-hop reasoning |
| Temporal | "Last spring," "in June," date ranges |

Individual results from these four searches are merged via Reciprocal Rank Fusion (RRF), then reranked by a neural cross-encoder model. The final output is trimmed to fit within the downstream LLM's token budget. The system automatically decides how to weight each strategy based on the query, without the caller needing to specify which one to use.
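Reciprocal Rank Fusion itself is a simple, well-known formula: each item scores the sum of 1 / (k + rank) over every ranked list it appears in. The sketch below shows the standard unweighted form with the commonly used constant k = 60. The value Hindsight uses, and how it weights strategies, are not stated in the article, so treat both as assumptions.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists of ids into one list, best first.

    Ranks are 1-based. Ties are broken alphabetically so the output is
    deterministic.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, item_id in enumerate(ranking, start=1):
            scores[item_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda item_id: (-scores[item_id], item_id))


# Hypothetical results from the four strategies (ids are placeholders).
semantic = ["a", "b", "c"]
keyword = ["b", "a"]
graph = ["b", "d"]
temporal = ["c", "b"]

fused = reciprocal_rank_fusion([semantic, keyword, graph, temporal])
# "b" comes first: it is the only id that appears in all four lists.
```

Because RRF uses only ranks, it can merge strategies whose raw scores (cosine similarity, BM25, graph distance) are not comparable with one another.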

This multi-strategy approach explains why Hindsight outperforms single-strategy systems. A traditional RAG retrieves chunks about "Alice" or "infrastructure issues" separately. Hindsight traverses the graph: Alice, then Project Atlas, then Kubernetes, then the outage. It returns both the team structure and the incident.
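The traversal in that example can be sketched as a bounded breadth-first search over entity links. The adjacency list and the `max_hops` parameter are illustrative assumptions; the article does not specify how Hindsight bounds or scores graph expansion.

```python
from collections import deque
from typing import Dict, List

# Hypothetical entity graph built from the example in the text.
GRAPH: Dict[str, List[str]] = {
    "Alice": ["Project Atlas"],
    "Project Atlas": ["Kubernetes"],
    "Kubernetes": ["outage"],
    "Bob": ["Project Atlas"],
}


def reachable_within(graph: Dict[str, List[str]], start: str, max_hops: int) -> Dict[str, int]:
    """Breadth-first search returning each reachable node with its hop count."""
    hops = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if hops[node] == max_hops:
            continue  # do not expand past the hop budget
        for neighbor in graph.get(node, []):
            if neighbor not in hops:
                hops[neighbor] = hops[node] + 1
                queue.append(neighbor)
    return hops


context = reachable_within(GRAPH, "Alice", max_hops=3)
```

A query about Alice thus surfaces the outage three hops away, which a pure similarity search on the word "Alice" would not retrieve.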

Reflect: The Agent That Learns

The reflect operation is what truly sets Hindsight apart from other memory systems. It allows the agent to reason over existing memories to form new connections, which are then persisted as opinions and observations.

The reflection system is powered by CARA (Coherent Adaptive Reasoning Agents), which integrates configurable disposition parameters into the reasoning process. You can configure the agent's skepticism, literalism, or empathy on a scale of 1 to 5. This ensures reasoning consistency across sessions: without this conditioning, agents may generate locally plausible but globally inconsistent responses.

During reflection, the agent checks sources in priority order: mental models, then observations, then raw facts.
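One possible reading of that priority order is a stable sort of candidate memories by source tier, so that mental models are considered before observations and observations before raw facts. The tier labels and the function below are hypothetical; whether Hindsight stops at the first tier with a hit or consults all tiers is not stated.

```python
from typing import List, Tuple

# Assumed tier labels; lower number means consulted earlier.
PRIORITY = {"mental_model": 0, "observation": 1, "fact": 2}


def order_for_reflection(items: List[Tuple[str, str]]) -> List[Tuple[str, str]]:
    """Sort (source, text) pairs by tier; ties keep their original order."""
    return sorted(items, key=lambda item: PRIORITY[item[0]])


candidates = [
    ("fact", "Alice works at Google"),
    ("mental_model", "Alice prefers written updates"),
    ("observation", "Alice replies fastest in the morning"),
    ("fact", "Alice joined Project Atlas"),
]
ordered = order_for_reflection(candidates)
```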

Use cases for reflect are varied and concrete:

An AI project manager reflecting on which risks need mitigation

A sales agent analyzing why certain outreach messages got responses while others did not

A support agent identifying customer questions not answered by current documentation

Opinions formed during reflection carry confidence scores that evolve over time. Supporting evidence increases confidence. Contradictions decrease it, with a doubled penalty. An agent that has been tracking a technology for months develops nuanced perspectives that fresh document retrieval can never replicate.
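A minimal sketch of such an update rule, assuming a fixed step size and reading "doubled penalty" as a contradiction costing twice what a supporting observation adds. The step value of 0.05 and the clamping to the range 0 to 1 are illustrative choices, not Hindsight's documented behavior.

```python
def update_confidence(confidence: float, evidence: str, step: float = 0.05) -> float:
    """Return the new confidence after one piece of evidence.

    evidence must be "supports" or "contradicts". Contradictions are
    penalized at twice the step (assumed interpretation).
    """
    if evidence == "supports":
        delta = step
    elif evidence == "contradicts":
        delta = -2 * step
    else:
        raise ValueError(f"unknown evidence kind: {evidence!r}")
    return min(1.0, max(0.0, confidence + delta))


belief = 0.50
belief = update_confidence(belief, "supports")      # about 0.55
belief = update_confidence(belief, "contradicts")   # about 0.45
```

Under this asymmetric rule, an opinion needs two confirmations to recover from a single contradiction, so beliefs that keep being contradicted fade quickly.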

Benchmarks: 91.4% on LongMemEval

The LongMemEval benchmark evaluates memory systems on conversations spanning up to 1.5 million tokens across multiple sessions. It measures four core competencies: accurate retrieval, test-time learning, long-range understanding, and conflict resolution.

Comparative Results

| Method | Model backbone | Overall accuracy (%) |
|---|---|---|
| Full-context | OSS-20B | 39.0 |
| Full-context | GPT-4o | 60.2 |
| Zep | GPT-4o | 71.2 |
| Supermemory | GPT-4o | 81.6 |
| Supermemory | GPT-5 | 84.6 |
| Supermemory | Gemini-3 | 85.2 |
| Hindsight | OSS-20B | 83.6 |
| Hindsight | OSS-120B | 89.0 |
| Hindsight | Gemini-3 | 91.4 |

Several points deserve attention in these results.

First, Hindsight with a 20-billion-parameter open-source model (83.6%) outperforms full-context GPT-4o (60.2%) and even Zep with GPT-4o (71.2%). Against the full-context baseline on the same OSS-20B backbone (39.0%), that is a gain of 44.6 points. This suggests that the bottleneck is memory architecture rather than model size.

Second, Hindsight is the first open-source system to break the 90% barrier on LongMemEval. At 91.4% with Gemini-3, it surpasses even Supermemory (85.2%) using the same backbone.

On the LoCoMo benchmark, another long-term conversational memory test, Hindsight achieves 89.61% versus 75.78% for the strongest prior open system. The research paper, co-authored with collaborators from Virginia Tech and The Washington Post, details the full evaluation. Results have been independently reproduced by the Sanghani Center for Artificial Intelligence and Data Analytics at Virginia Tech.

The most dramatic gains come in multi-session queries (+211%), temporal reasoning (+316%), and knowledge updates, precisely the cases where traditional RAG systems fail.

Hindsight vs RAG vs Knowledge Graphs

To understand where Hindsight fits in the ecosystem, it helps to compare approaches.

Traditional RAG and Its Limits

Traditional RAG does one thing: semantic similarity search. It chunks documents, converts them to vectors, and retrieves the k nearest passages. The approach is stateless: the same query produces the same chunks, the same response. RAG cannot represent relationships between entities, model how information evolves over time, or track connections between facts.
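The stateless retrieval loop can be sketched in a few lines. To keep the example self-contained, a bag-of-words count vector stands in for a real embedding model; that substitution, and the function names, are assumptions made purely for illustration.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k(query: str, chunks: List[str], k: int = 2) -> List[str]:
    """Return the k chunks most similar to the query. No state is kept."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda chunk: cosine(q, embed(chunk)), reverse=True)
    return ranked[:k]


chunks = [
    "alice works at google",
    "the outage hit kubernetes",
    "bob likes python",
]
hits = top_k("where does alice work", chunks, k=1)
```

Nothing in this loop records when a chunk was written, who it is about, or whether it was later contradicted, which is the limitation the rest of this section addresses.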

As Chris Latimer, co-founder and CEO of Vectorize, puts it: "Most of the existing RAG infrastructure that people have put into place is not performing at the level that they would like it to."

Traditional Knowledge Graphs

Knowledge graphs excel at representing relationships between entities, but they are typically static. They do not handle temporal evolution well, do not distinguish facts from beliefs, and do not let agents form opinions that evolve with new evidence.

Hindsight's Hybrid Approach

Hindsight combines the best of both worlds while adding capabilities that neither RAG nor knowledge graphs possess:

| Capability | Traditional RAG | Knowledge graph | Hindsight |
|---|---|---|---|
| Semantic search | Yes | No | Yes |
| Keyword search | No | No | Yes (BM25) |
| Entity relationships | No | Yes | Yes |
| Temporal reasoning | No | Limited | Yes |
| Opinions with confidence | No | No | Yes |
| Learning through reflection | No | No | Yes |
| Entity resolution | No | Partial | Yes |
| Fact/belief separation | No | No | Yes |

Installation and Practical Use Cases

Quick Start

Getting Hindsight up and running is straightforward. The recommended method uses Docker:

    export OPENAI_API_KEY=your-key

    docker run --rm -it --pull always -p 8888:8888 -p 9999:9999 \
      -e HINDSIGHT_API_LLM_API_KEY=$OPENAI_API_KEY \
      -e HINDSIGHT_API_LLM_MODEL=o3-mini \
      -v $HOME/.hindsight-docker:/home/hindsight/.pg0 \
      ghcr.io/vectorize-io/hindsight:latest

The system supports multiple LLM providers: OpenAI, Anthropic, Gemini, Groq, Ollama, and LM Studio. Clients are available in Python, TypeScript, and Go, plus a CLI and REST API. Hindsight is framework-agnostic: it works with CrewAI, Pydantic AI, Vercel AI SDK, LiteLLM, and any MCP-compatible system.

The Python code for interacting with the system is remarkably concise:

    from hindsight_client import Hindsight

    client = Hindsight(base_url="http://localhost:8888")

    # Store information
    client.retain(bank_id="my-bank", content="Alice works at Google")

    # Retrieve memories
    client.recall(bank_id="my-bank", query="What does Alice do?")

    # Reflect and generate new observations
    client.reflect(bank_id="my-bank", query="What should I know about Alice?")

Enterprise Use Cases

Hindsight targets organizations that have already deployed RAG infrastructure but are not getting the performance they need. The system positions itself as a drop-in replacement for existing API calls.

The most relevant scenarios include:

Customer support agents that remember past interactions, identify recurring issues, and adapt responses based on complete customer history

Coding assistants that retain each developer's technical preferences and learn from their feedback

Sales agents that track prospect relationships over months, remember objections raised, and refine their approach

AI project managers that accumulate knowledge about risks, dependencies, and decisions made over time

Vectorize is also working with hyperscalers to integrate this technology into cloud platforms, partnering with cloud providers to enhance their LLMs with agent memory capabilities.

What Hindsight Changes for the Future of AI Agents

Hindsight marks a turning point in how the technical community thinks about agent memory. The project demonstrates that a well-designed memory system can transform the performance of a modest model: a 20-billion-parameter open-source model with Hindsight outperforms full-context GPT-4o. The bottleneck was never model size; it was memory quality.

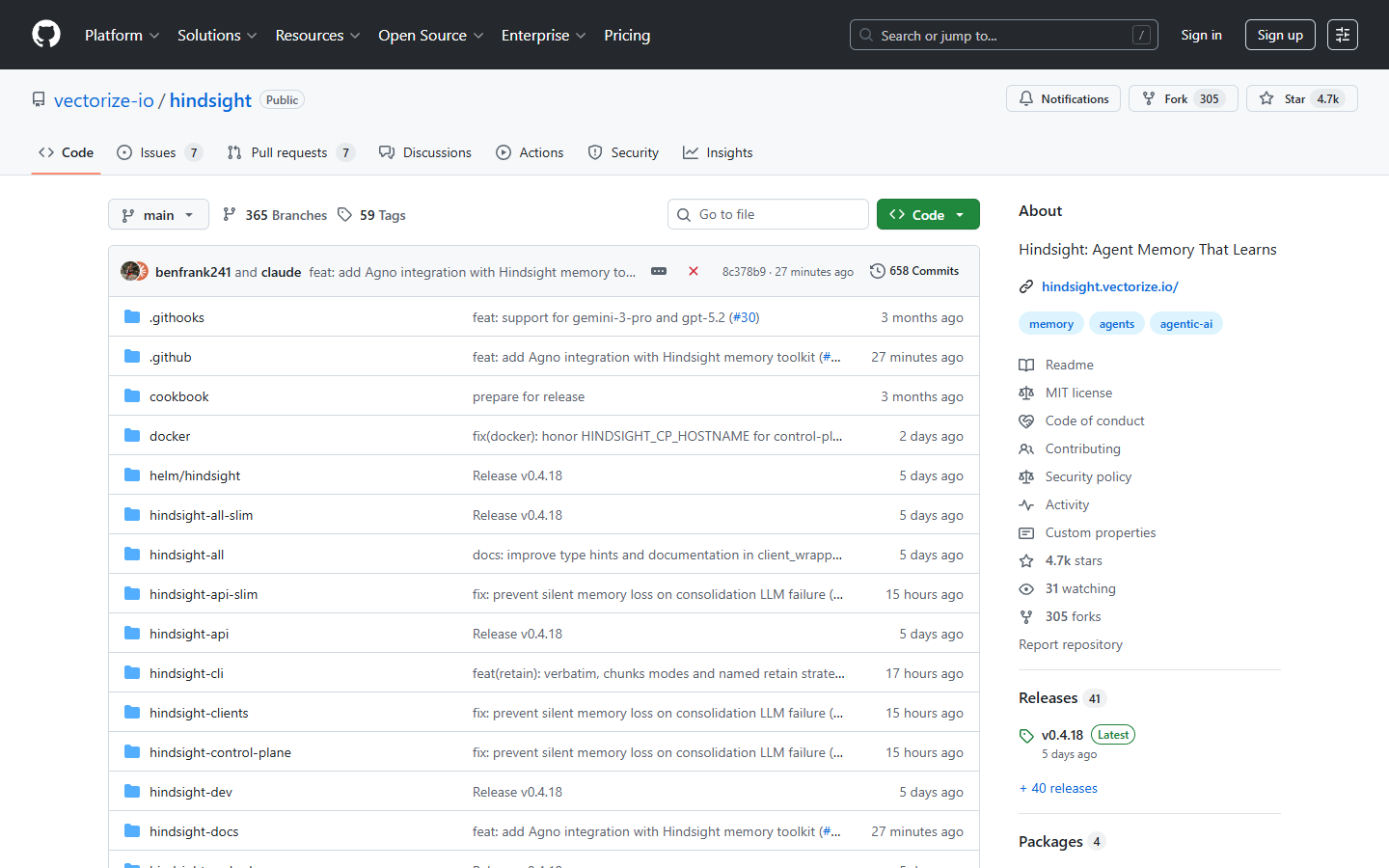

With an MIT license, 4,600 GitHub stars and growing fast, a research paper co-authored with collaborators from Virginia Tech and The Washington Post, and an architecture that explicitly separates evidence from inference, Hindsight lays the foundation for a new generation of agents capable not just of remembering, but of genuinely learning.

The project is still young (version 0.2.1 as of January 2026), but the biomimetic approach it proposes, organizing memory into facts, experiences, opinions, and observations, offers a framework that may well become the industry standard. For teams building agents meant to operate over weeks or months, with recurring users and evolving contexts, Hindsight likely represents the most significant advance since the introduction of RAG.
