Fish Speech S2: The Open Source TTS Rivaling ElevenLabs (And It's Free)

Published on Mar 12, 2026. Updated on May 27, 2026.

At Emelia, we use AI to automate B2B prospecting, from cold email to data enrichment. AI-generated voice is the next frontier for automated outreach agents, and it's something Bridgers is already integrating into the AI solutions it builds for clients. Maylee, our smart email client, could also leverage TTS for voice responses. When Fish Audio releases an open source model that claims to rival ElevenLabs, we pay close attention.

On March 10, 2026, Fish Audio released Fish Speech S2, a new generation of open source text-to-speech. With over 26,000 GitHub stars, emotion control through natural language tags, multi-speaker generation in a single pass, and latency under 150 ms, the model is generating serious buzz. The question popping up everywhere on Reddit and X: is this really a free alternative to ElevenLabs?

What is Fish Speech S2: The New Open Source TTS with Emotion Control

Fish Speech S2 is a text-to-speech system developed by Fish Audio, released as open source on March 10, 2026. It succeeds Fish Speech S1, which had already claimed the top spot on the TTS-Arena2 benchmark.

What sets S2 apart from the previous generation is its fine-grained voice control. The model accepts natural language instructions embedded directly in the input text, formatted as tags in brackets. You can write cues like [whisper], [angry], [laughing nervously], or [professional broadcast tone] at the exact position where you want the tone to change. Over 15,000 unique tags are supported, and the system understands free-form descriptions, not just a fixed list.

The architecture relies on a Dual-AR (Dual Autoregressive) model: a 4-billion-parameter Slow AR predicts semantic tokens, while a 400-million-parameter Fast AR generates fine acoustic details. The whole system is trained on over 10 million hours of audio data covering 80+ languages, with reinforcement learning alignment (GRPO) to ensure consistency between expressiveness and robustness.
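To make the two-level structure concrete, here is a toy sketch of a Dual-AR style decoding loop. It is not Fish Audio's implementation: the stand-in functions `toy_slow` and `toy_fast`, the `NUM_ACOUSTIC_TOKENS` constant, and the exact conditioning are assumptions chosen for illustration, since this article does not specify them.

```python
from typing import Callable, List

NUM_ACOUSTIC_TOKENS = 4  # hypothetical number of acoustic tokens per frame


def dual_ar_decode(
    slow_step: Callable[[List[int]], int],
    fast_step: Callable[[int, List[int]], int],
    num_frames: int,
) -> List[List[int]]:
    """Run two nested autoregressive loops and return one token list per frame."""
    semantic_history: List[int] = []
    frames: List[List[int]] = []
    for _ in range(num_frames):
        # Slow AR: one semantic token per frame, conditioned on previous ones
        semantic = slow_step(semantic_history)
        semantic_history.append(semantic)
        # Fast AR: acoustic tokens for this frame, each conditioned on the
        # semantic token and on the acoustic tokens already emitted
        acoustic: List[int] = []
        for _ in range(NUM_ACOUSTIC_TOKENS):
            acoustic.append(fast_step(semantic, acoustic))
        frames.append([semantic] + acoustic)
    return frames


# Deterministic stand-ins for the two networks, so the sketch runs without weights
def toy_slow(history: List[int]) -> int:
    return len(history) * 10


def toy_fast(semantic: int, acoustic: List[int]) -> int:
    return semantic + len(acoustic) + 1


frames = dual_ar_decode(toy_slow, toy_fast, num_frames=2)
print(frames)
```

The point of the split is that the large model runs once per frame while the small model handles the inner loop, which keeps the expensive steps to a minimum.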

The benchmark results are striking. On Seed-TTS Eval, S2 achieves the best Word Error Rate among all evaluated models, including proprietary systems: 0.54% in Chinese and 0.99% in English. On the Audio Turing Test, it scores 0.515, outperforming Seed-TTS (0.417) by 24% and MiniMax-Speech (0.387) by 33%. On EmergentTTS-Eval, its overall win rate is 81.88% against gpt-4o-mini-tts, the highest among all evaluated models, including closed-source systems from Google and OpenAI.
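The relative gains on the Audio Turing Test follow directly from the raw scores. A quick check, using only the three scores quoted above (the helper name `relative_gain` is ours, for illustration):

```python
def relative_gain(score: float, baseline: float) -> float:
    """Return how much `score` exceeds `baseline`, as a percentage."""
    return (score - baseline) / baseline * 100


S2_SCORE = 0.515
baselines = {"Seed-TTS": 0.417, "MiniMax-Speech": 0.387}

gains = {name: relative_gain(S2_SCORE, base) for name, base in baselines.items()}
for name, gain in gains.items():
    print(f"S2 vs {name}: +{gain:.1f}%")
```

This gives roughly 23.5% and 33.1%, consistent with the rounded 24% and 33% figures.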

The model natively supports multi-speaker and multi-turn generation in a single pass, allowing you to create complete dialogues between multiple characters without generating each voice separately.

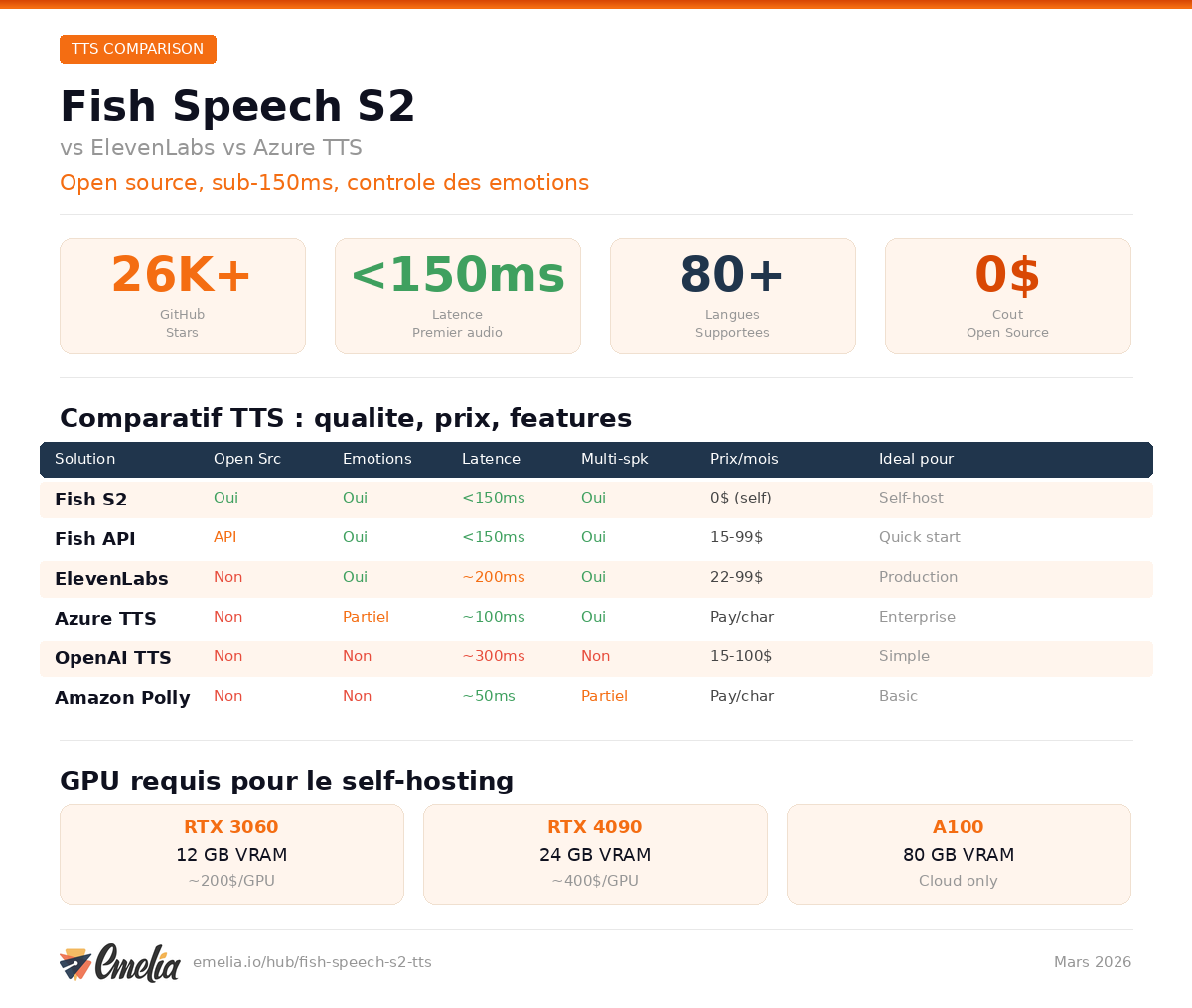

Fish Speech S2 vs ElevenLabs vs Azure TTS: Quality, Price and Latency Compared

Here is the full comparison of the major TTS solutions available in 2026.

| TTS Solution | Open Source | Emotion Control | Latency | Multi-Speaker | Price | Best For |
|---|---|---|---|---|---|---|
| Fish Speech S2 | Yes (weights + code) | Yes, natural language tags (15,000+) | < 150 ms | Yes, native single-pass | Free (self-host) or API from $0/mo | Developers, startups, agencies |
| ElevenLabs | No | Limited (preset styles) | ~ 500 ms | Yes (separate API) | $5 to $1,320/mo | Content creators, production |
| Azure TTS | No | Preset styles (SSML) | ~ 200 ms | Yes (SSML) | ~ $15/million chars (neural) | Enterprises, Microsoft stack |
| Google Cloud TTS | No | No (Chirp 3 limited) | ~ 300 ms | No | $4 to $30/million chars | Google Cloud apps |
| OpenAI TTS | No | Limited | ~ 500 ms | No | $15 to $30/million chars | GPT integration |
| Amazon Polly | No | No | ~ 200 ms | No | $4 to $30/million chars | AWS ecosystem |

The price difference is dramatic. ElevenLabs charges $99/month for its Pro plan (500,000 credits) and goes up to $1,320/month for the Business plan. Fish Audio offers a free tier with 7 minutes of S2 generation, a Plus plan at $11/month with 200 minutes, and a Pro plan at $75/month with 27 hours of generation. The self-hosting option is entirely free if you have the required GPU.

According to Fish Audio's official comparison page, the estimated cost per minute is $0.05 for Fish Audio versus $0.18 for ElevenLabs, roughly 70% cheaper.
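As a sanity check, the plan prices quoted above can be converted to an effective per-minute cost. This sketch assumes the full monthly allowance is used; the names `PLANS` and `cost_per_minute` are illustrative.

```python
# Plan figures quoted above: (monthly price in USD, included minutes of S2 generation)
PLANS = {
    "Plus": (11.0, 200),
    "Pro": (75.0, 27 * 60),  # 27 hours expressed in minutes
}


def cost_per_minute(price_usd: float, minutes: int) -> float:
    """Effective cost per generated minute when the whole allowance is consumed."""
    return price_usd / minutes


per_minute = {name: cost_per_minute(*plan) for name, plan in PLANS.items()}

# Saving implied by the per-minute estimates from Fish Audio's comparison page
FISH_PER_MIN, ELEVENLABS_PER_MIN = 0.05, 0.18
saving_pct = (1 - FISH_PER_MIN / ELEVENLABS_PER_MIN) * 100

for name, cost in per_minute.items():
    print(f"{name}: ${cost:.3f}/min")
print(f"Saving vs ElevenLabs: {saving_pct:.0f}%")
```

Plus works out to about $0.055 per minute and Pro to about $0.046, both close to the $0.05 estimate, and the implied saving is about 72%.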

On quality, Fish Speech S2 outperforms closed-source models on standardized benchmarks. In practice, ElevenLabs retains an edge on certain highly natural English voices, but S2 excels at expressive control and multilingual coverage, with particularly impressive results for non-English languages.

How to Install Fish Speech S2 Locally: GPU Requirements and Step-by-Step Setup

Hardware Requirements

| Use Case | Recommended GPU | VRAM | Expected Speed |
|---|---|---|---|
| Development | RTX 3060 | 12 GB | ~ 1:15 real-time factor |
| Production | RTX 4090 | 24 GB | ~ 1:7 real-time factor |
| Enterprise | A100 / H200 | 40 GB+ | ~ 1:5 real-time factor |

The absolute minimum is 12 GB of VRAM. For production use, 24 GB is recommended. The model weighs approximately 9 GB on disk and consumes about 17 GB of VRAM during inference based on community testing.
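The VRAM thresholds from the table can be expressed as a small helper for pre-flight checks. This is a sketch: `hardware_tier` is a hypothetical name, the cut-offs simply mirror the table, and the function does not query the GPU itself.

```python
def hardware_tier(vram_gb: float) -> str:
    """Map available VRAM (in GB) to the use-case tiers listed in the table."""
    if vram_gb < 12:
        return "below minimum"
    if vram_gb < 24:
        return "development"
    if vram_gb < 40:
        return "production"
    return "enterprise"


for gb in (8, 12, 24, 80):
    print(f"{gb} GB -> {hardware_tier(gb)}")
```

With PyTorch installed, the total VRAM in bytes can be read from `torch.cuda.get_device_properties(0).total_memory` and divided by 1024**3 before being passed in.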

Step-by-Step Installation

1. System prerequisites (Linux or WSL)

```bash
apt install portaudio19-dev libsox-dev ffmpeg
```

2. Create a Python environment

```bash
conda create -n fish-speech python=3.12
conda activate fish-speech
```

3. Clone the repository and install

```bash
git clone https://github.com/fishaudio/fish-speech.git
cd fish-speech
pip install -e .[cu129]  # Adjust for your CUDA version
```

4. Download the S2 Pro model

```bash
huggingface-cli login
huggingface-cli download fishaudio/s2-pro --local-dir checkpoints/s2-pro
```

5. Launch the web interface

```bash
python tools/run_webui.py
```

The Gradio interface is then accessible at http://localhost:7860.

For Docker users, a pre-built image is available:

```bash
git clone https://github.com/fishaudio/fish-speech.git
cd fish-speech
docker compose --profile webui up
```

Adding the COMPILE=1 environment variable enables torch.compile and provides a significant inference speed boost.

Emotion Control via Tags: [whisper], [angry], [laugh] and How It Works

The expressive control system in Fish Speech S2 works by injecting natural language tags directly into the text to be synthesized. Unlike traditional systems that use rigid SSML markup or predefined styles, S2 accepts free-form descriptions.

Here is how it works in practice. You write:

```text
[professional broadcast tone] Welcome to today's episode. [whispers] But first, a secret. [laughing nervously] I probably shouldn't tell you this. [angry] This is completely unacceptable!
```

The model interprets each tag and adjusts tone, pacing, intonation, and vocal effects in real time.

The available tag categories include:

Basic emotions: [angry], [sad], [excited], [happy], [fearful]

Advanced emotions: [disdainful], [unhappy], [anxious], [sarcastic]

Tones: [in a hurry tone], [shouting], [whispering], [professional broadcast tone]

Special effects: [laughing], [sobbing], [sighing], [inhale]

On the Fish Audio Instruction Benchmark, the model achieves a tag activation rate of 93.3% and a quality score of 4.51 out of 5.0, as evaluated by Gemini 3 Pro. The win rate on EmergentTTS-Eval for paralinguistics reaches 91.61%, confirming that emotional control is S2's primary strength.

Because the tags are free-form natural language, you can experiment with creative descriptions. [pitch up slightly while maintaining warmth] is a valid instruction. The system is not limited to a closed list.
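When writing longer scripts, it helps to see which tag governs which stretch of text before sending anything to the model. The sketch below is a client-side helper only, not how S2 parses tags internally; `split_by_tags` is a hypothetical name, and the helper assumes tags are written in square brackets as described in this article.

```python
import re
from typing import Optional

# Matches one bracketed tag such as [whispers] or [professional broadcast tone]
TAG_PATTERN = re.compile(r"\[([^\[\]]+)\]")


def split_by_tags(text: str) -> list[tuple[Optional[str], str]]:
    """Split tagged text into (tag, segment) pairs.

    The tag is None for text that appears before the first tag.
    A tag with no text after it produces no pair.
    """
    segments = []
    current_tag = None
    position = 0
    for match in TAG_PATTERN.finditer(text):
        chunk = text[position:match.start()].strip()
        if chunk:
            segments.append((current_tag, chunk))
        current_tag = match.group(1).strip()
        position = match.end()
    tail = text[position:].strip()
    if tail:
        segments.append((current_tag, tail))
    return segments


script = "[professional broadcast tone] Welcome to today's episode. [whispers] But first, a secret."
for tag, segment in split_by_tags(script):
    print(f"{tag!r}: {segment}")
```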

Self-Hosting vs Fish Audio API: Real Costs and Performance

Two options are available for using Fish Speech S2: self-hosting (running the model on your own hardware) or using Fish Audio's hosted API.

Self-hosting: Real Costs

Self-hosting is "free" in terms of licensing, but the GPU has a cost. Here is a realistic estimate:

| Option | Estimated Monthly Cost | Performance |
|---|---|---|
| Cloud GPU (RTX 4090, e.g. RunPod) | $300 to $500/mo | RTF ~1:7, decent latency |
| Cloud GPU (A100 40 GB) | $800 to $1,200/mo | RTF ~1:5, very good |
| Cloud GPU (H200) | $1,500 to $2,500/mo | RTF 0.195, production-ready |
| Personal GPU (RTX 4090 purchased) | ~ $1,600 one-time + electricity | RTF ~1:7, no recurring fees |

For comparison, an ElevenLabs Pro plan at $99/month provides 500,000 credits, equivalent to approximately 8 hours of audio generation. If your volume is low to moderate, the ElevenLabs or Fish Audio API remains more cost-effective than self-hosting. Self-hosting becomes worthwhile at high volume, typically several hours of generation per day.
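A rough break-even estimate shows where "several hours per day" comes from. This sketch assumes API usage is billed at the effective Pro-plan rate ($75 for 27 hours) at any volume, which is a simplification of ours and not taken from Fish Audio's pricing page, and it ignores whether a single GPU can actually sustain that throughput.

```python
API_PRICE_USD = 75.0  # Fish Audio Pro plan, per month
API_HOURS = 27.0      # hours of generation included in the Pro plan


def break_even_hours(gpu_monthly_usd: float) -> float:
    """Monthly audio hours at which a fixed GPU bill equals API spend at the Pro rate."""
    api_rate_per_hour = API_PRICE_USD / API_HOURS
    return gpu_monthly_usd / api_rate_per_hour


low = break_even_hours(300.0)   # cheaper end of the cloud RTX 4090 range
high = break_even_hours(500.0)  # upper end of the same range
print(f"Break-even: {low:.0f} to {high:.0f} hours/month")
print(f"Per day (30-day month): {low / 30:.1f} to {high / 30:.1f} hours")
```

Under these assumptions, a cloud RTX 4090 breaks even at 108 to 180 hours of audio per month, or about 3.6 to 6 hours per day.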

Fish Audio API: Plans and Pricing

Fish Audio offers a hosted API at fish.audio with accessible pricing:

Free: 7 minutes of S2 generation, 8,000 monthly credits

Plus ($11/mo): 200 minutes, API access, commercial use

Pro ($75/mo): 27 hours, priority, 30,000 characters per generation

The API is straightforward to integrate:

```python
from fishaudio import FishAudio
from fishaudio.utils import save

client = FishAudio(api_key="your_api_key")
audio = client.tts.convert(
    text="Hello, this is a text-to-speech test.",
    model="s2-pro",
)
save(audio, "output.mp3")
```

For most use cases, the Fish Audio API offers the best cost-to-performance ratio. Self-hosting makes sense for businesses with high volumes or strict data privacy requirements.
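Long scripts need to be split before they are sent, since the Pro plan caps each generation at 30,000 characters. Below is a minimal sketch of a sentence-aware splitter; `chunk_text` is a hypothetical helper, not part of the Fish Audio SDK, and how the service counts characters (for example, whether tags are included) is not covered here.

```python
import re


def chunk_text(text: str, max_chars: int = 30_000) -> list[str]:
    """Greedily pack whole sentences into chunks of at most `max_chars` characters."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: list[str] = []
    current = ""
    for sentence in sentences:
        # Hard-split any single sentence that exceeds the limit on its own
        while len(sentence) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(sentence[:max_chars])
            sentence = sentence[max_chars:]
        if not sentence:
            continue
        candidate = f"{current} {sentence}" if current else sentence
        if len(candidate) <= max_chars:
            current = candidate
        else:
            chunks.append(current)
            current = sentence
    if current:
        chunks.append(current)
    return chunks


print(chunk_text("One. Two. Three.", max_chars=9))
```

Note that a tone tag applies from where it appears, so a chunk that starts mid-passage may need its governing tag repeated at the top.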

Use Cases: Voiceover, Podcasts, Call Centers, AI Sales Agents

Content Creators and Podcasters

Fish Speech S2 lets you generate studio-quality voiceovers for free when running locally. For a YouTuber producing videos regularly, TTS costs can run several hundred dollars per month on ElevenLabs. With S2 self-hosted on an RTX 4090, that cost disappears after the initial investment. Emotion control adds life to narrations: an enthusiastic tone for intros, a whisper for suspenseful moments, a laugh mid-sentence for a casual podcast feel.

The multi-speaker generation feature is particularly valuable for podcasters. Instead of generating each speaker separately and manually editing them together, S2 can produce a complete two-person dialogue in a single pass, maintaining distinct voice characteristics and natural conversational flow. For audiobook narrators, the ability to switch between character voices without post-production splicing saves hours of editing time.

Sales Teams and AI Voice Agents for Outreach

This is a use case we care about directly at Emelia. AI voice agents for phone-based prospecting require fast TTS (under 200 ms latency for natural conversation), expressive output (tone matters in sales), and affordable scaling. Fish Speech S2 checks all three boxes. With latency under 150 ms and tone control, you can build an agent that adapts its voice based on conversation context.

Imagine an AI sales agent that starts with a [warm, friendly tone] for the introduction, shifts to a [confident, professional tone] when presenting the value proposition, and uses a [calm, reassuring tone] when handling objections. This level of vocal direction was previously only possible with human callers. For teams running thousands of outreach calls per day, the cost savings compared to ElevenLabs at scale are substantial.
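In code, that stage-based direction can be as simple as a lookup table that prefixes each line with the matching tag. The stage names and the `with_tone` helper below are hypothetical; only the three tone descriptions come from the scenario above.

```python
# Conversation stage -> tone description, taken from the scenario above
STAGE_TONES = {
    "introduction": "warm, friendly tone",
    "value_proposition": "confident, professional tone",
    "objection_handling": "calm, reassuring tone",
}


def with_tone(stage: str, line: str) -> str:
    """Prefix a line with the bracketed tone tag for its conversation stage."""
    tone = STAGE_TONES.get(stage)
    return f"[{tone}] {line}" if tone else line


print(with_tone("introduction", "Hi, thanks for taking my call."))
print(with_tone("objection_handling", "That's a fair concern."))
```

Unknown stages pass through untagged, so the agent falls back to the voice's default delivery.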

Call Centers and Automated Customer Service

For call centers, TTS needs to be reliable, multilingual, and capable of handling thousands of concurrent requests. S2's SGLang inference engine, with continuous batching and paged KV cache, is designed for this type of load. On a single H200 GPU, throughput reaches over 3,000 acoustic tokens per second. For a company operating across multiple countries, support for 80+ languages is a significant advantage.

The prefix caching feature deserves special attention for call center deployments. When the same voice is reused across multiple requests (which is the norm in customer service), SGLang's Radix tree caches the corresponding KV states. Fish Audio reports an average prefix-cache hit rate of 86.4%, with peaks above 90%. This means repeated requests largely skip the voice-loading stage, making the system extremely efficient at handling high concurrency with consistent voice quality.
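The effect of voice reuse on hit rate can be illustrated with a toy cache. This sketch swaps SGLang's radix tree for a plain LRU dictionary keyed by voice ID, so it only models the hit/miss bookkeeping, not real KV-state sharing; the class name and voice IDs are made up.

```python
from collections import OrderedDict


class VoicePrefixCache:
    """Toy LRU cache keyed by voice ID; a stand-in for a radix-tree KV cache."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.entries = OrderedDict()
        self.hits = 0
        self.requests = 0

    def lookup(self, voice_id: str) -> bool:
        """Record a request; return True if the voice prefix was already cached."""
        self.requests += 1
        if voice_id in self.entries:
            self.entries.move_to_end(voice_id)  # mark as most recently used
            self.hits += 1
            return True
        self.entries[voice_id] = f"kv-state-for-{voice_id}"  # placeholder payload
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict least recently used
        return False

    @property
    def hit_rate(self) -> float:
        return self.hits / self.requests if self.requests else 0.0


cache = VoicePrefixCache(capacity=2)
traffic = ["agent_a", "agent_a", "agent_b", "agent_a",
           "agent_a", "agent_b", "agent_a", "agent_b"]
for voice in traffic:
    cache.lookup(voice)
print(f"hit rate: {cache.hit_rate:.2f}")
```

With two voices shared across eight requests, only the first request per voice misses, so the hit rate climbs as traffic grows, which is the pattern a call center with a handful of fixed voices would produce.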

Developers and App Integration

The unified API and open source nature of S2 make integration straightforward. You can deploy the model on your own infrastructure, fine-tune it on your specific data, and integrate it without vendor lock-in. The Dual-AR architecture, structurally isomorphic to standard LLMs, inherits all existing serving optimizations (SGLang, vLLM), which simplifies deployment.

The release includes not just model weights but also the complete fine-tuning code, meaning you can train the model on domain-specific data. A legal tech company could fine-tune S2 on legal terminology pronunciation. A medical platform could train it on drug names and medical terms. This level of customization is simply not available with closed-source alternatives like ElevenLabs or OpenAI TTS.

Agencies Creating Voice Content for Clients

For an agency like Bridgers, Fish Speech S2 opens up possibilities for large-scale voice content creation: ad narrations, multilingual dubbing, rapid voice prototyping for client projects. The Pro plan at $75/month provides 27 hours of generation, covering most monthly needs for an agency.

Limitations of Fish Speech S2: What Doesn't Work Yet

Fish Speech S2 is impressive, but it is not perfect. Here are the limitations to know before adopting it.

Non-commercial license for model weights. This is the most important point. While the code is Apache-licensed, the model weights are under the Fish Audio Research License. Commercial use requires a separate license from Fish Audio. This means it is not fully "free" for professional use, as several commentators on Reddit have pointed out.

GPU cost for self-hosting. The 12 GB VRAM minimum excludes entry-level consumer GPUs. For production use, 24 GB of VRAM is recommended, which means an RTX 4090 or equivalent. For smaller organizations, the hardware investment can be prohibitive.

Quality vs ElevenLabs on nuanced English speech. While S2 outperforms ElevenLabs on standardized benchmarks, some users report that ElevenLabs retains an edge in pure naturalness for certain highly specific English voices. The gap is narrowing, but it still exists for the most demanding use cases.

Uneven language coverage. Tier 1 languages (Japanese, English, Chinese) receive the best quality. French, German, and Spanish are Tier 2, with slightly lower quality. For rarer languages, results can be inconsistent.

No native macOS support. Installation requires Linux or WSL. Since WSL is only available on Windows, Mac users need Docker, adding a layer of complexity.

No official quantized version. Some Reddit users report lacking the VRAM needed and are waiting for a quantized version. Fish Audio has not yet released a lighter version for GPUs with less than 16 GB.

Inference speed on consumer hardware. While the benchmarks showcase impressive RTF numbers on high-end datacenter GPUs like the H200, consumer hardware tells a different story. On an RTX 3060 with 12 GB VRAM, expect a real-time factor around 1:15, meaning one minute of audio takes roughly 15 seconds to generate. On an RTX 4090, this drops to about 1:7. For real-time conversational applications, anything below an RTX 4090 may struggle to maintain natural conversation flow.

Verdict: Who Should Use Fish Speech S2?

Fish Speech S2 is the most advanced open source TTS model available in March 2026. Its emotional control via natural language tags, native multi-speaker generation, and state-of-the-art benchmark performance make it a serious contender against proprietary solutions.

Use Fish Speech S2 if you are: a developer integrating TTS into an application, a startup looking to minimize costs, an agency producing voice content, or a business with multilingual needs.

Stay with ElevenLabs if: you need the simplicity of a turnkey service, your volume is low (the $5/month Starter plan is enough), or you do not want to manage infrastructure.

Consider Azure TTS or Amazon Polly if: you are already in the Microsoft or AWS ecosystem and need native integration.

Open source TTS just crossed a major threshold. Fish Speech S2 does not just catch up with paid solutions; it surpasses them on several measurable criteria. The only question remaining: how long before this level of quality becomes the free standard for everyone?
