Claude Code Review: $25 Per Review, Is It Worth It?

Published on March 11, 2026

Anthropic just launched Code Review in Claude Code, a multi-agent system that automatically scans every pull request for bugs. The price tag? Between $15 and $25 per review. At Bridgers, our digital and AI agency, we build solutions with Claude Code daily for our clients. And at Emelia, our B2B prospecting SaaS, we use Claude Code to accelerate product development. So when Anthropic ships a tool that, by its own numbers, surfaces findings on 84% of large PRs, we test it immediately. Here is our complete analysis.

What Is Claude Code Review and How Does It Work?

Claude Code Review is an automated code review tool launched on March 9, 2026, by Anthropic. Unlike traditional linters or style checkers, it focuses exclusively on logic errors, regression bugs, and security vulnerabilities.

The core innovation is a multi-agent architecture. When a pull request opens on GitHub, Claude Code Review deploys a team of AI agents working in parallel. Each agent examines the code from a different angle: one hunts for logic bugs, another checks security, a third analyzes potential regressions. A final agent aggregates all results, removes duplicates, filters false positives, and ranks issues by severity.

The output is a single summary comment directly in the GitHub PR, along with precise inline comments on problematic lines. Each detected bug comes with a step-by-step explanation: what the issue is, why it is risky, and how to fix it.

Cat Wu, Anthropic's head of product, described the severity system to TechCrunch: red labels mark the most critical issues, yellow flags potential problems, and purple highlights pre-existing bugs in historical code.
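The aggregation step described above (merge, deduplicate, filter, rank) can be sketched in a few lines of Python. This is purely illustrative and not Anthropic's implementation: the `Finding` fields, the confidence score, the `min_confidence` threshold, and deduplication by file and line are all assumptions made for the example. Only the red, yellow, and purple labels come from the description above.

```python
from dataclasses import dataclass

# Severity order follows the labels described above: red, yellow, purple.
SEVERITY_RANK = {"red": 0, "yellow": 1, "purple": 2}


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    message: str
    severity: str      # "red", "yellow" or "purple"
    confidence: float  # hypothetical 0..1 score assigned by an agent


def aggregate(agent_reports, min_confidence=0.5):
    """Merge per-agent findings: deduplicate, filter, rank by severity."""
    best = {}
    for report in agent_reports:
        for finding in report:
            key = (finding.file, finding.line)
            # Assumed dedup rule: keep the most confident finding per location.
            if key not in best or finding.confidence > best[key].confidence:
                best[key] = finding
    # Assumed false-positive filter: drop low-confidence findings.
    kept = [f for f in best.values() if f.confidence >= min_confidence]
    return sorted(kept, key=lambda f: (SEVERITY_RANK[f.severity], f.file, f.line))


# Three hypothetical agents, each looking at the PR from a different angle.
logic_agent = [Finding("auth.py", 42, "token check inverted", "red", 0.9)]
security_agent = [
    Finding("auth.py", 42, "authentication bypass", "red", 0.8),
    Finding("db.py", 7, "possible SQL injection", "yellow", 0.3),
]
regression_agent = [Finding("cache.py", 12, "stale cache key", "purple", 0.7)]

ranked = aggregate([logic_agent, security_agent, regression_agent])
for f in ranked:
    print(f.severity, f.file, f.line, f.message)
```

In this toy run, the two reports on `auth.py` line 42 collapse into one, the low-confidence `db.py` finding is dropped, and the red issue is listed before the purple one. A real system would need a smarter notion of "duplicate" than a shared line number.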

A typical review takes about 20 minutes, which is significantly slower than tools like CodeRabbit (about 2 minutes), but Anthropic is deliberate about this trade-off: depth over speed.

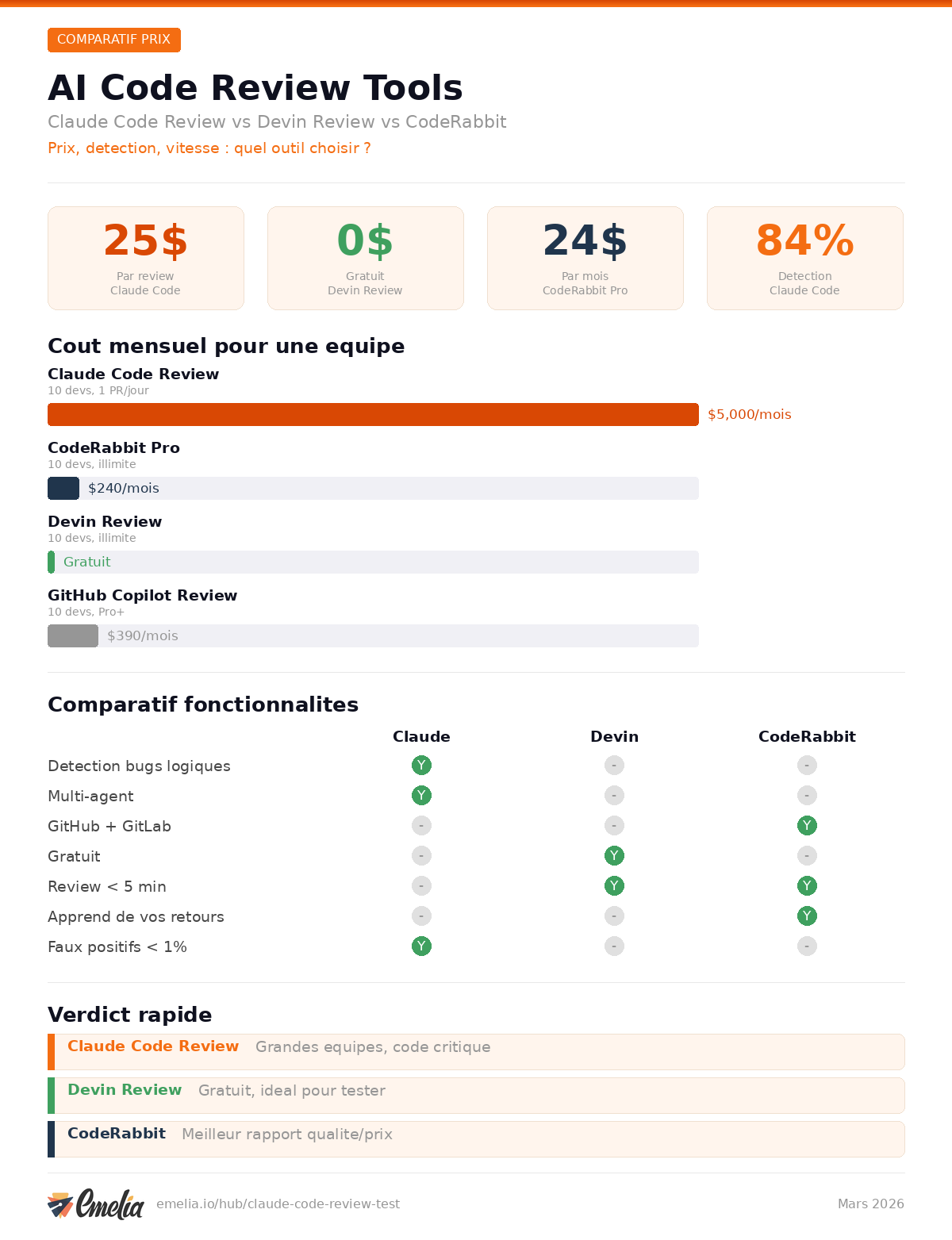

Claude Code Review Pricing: How Much Does It Really Cost?

This is where it gets controversial. Claude Code Review charges per use, based on token consumption. On average, each review costs between $15 and $25, depending on PR size and complexity.

Let's do the math. According to ZDNET, an engineer using Claude Code typically produces about 5 PRs per day. A traditional developer submits 1 to 2 per week. Take a company with 100 developers each submitting one PR per day, 5 days a week: that is about 2,000 reviews per month. At a $20 midpoint per review, the monthly cost reaches roughly $40,000, or $480,000 per year.
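The arithmetic behind that estimate is easy to reproduce. The sketch below assumes a four-week month and uses the $15 to $25 range quoted above; the function name and parameters are ours, not part of any Anthropic tooling.

```python
def monthly_review_cost(developers, prs_per_dev_per_day, cost_per_review,
                        workdays_per_week=5, weeks_per_month=4):
    """Estimated monthly spend, assuming every PR triggers one review."""
    reviews = developers * prs_per_dev_per_day * workdays_per_week * weeks_per_month
    return reviews * cost_per_review


low = monthly_review_cost(100, 1, 15)    # 30000
mid = monthly_review_cost(100, 1, 20)    # 40000
high = monthly_review_cost(100, 1, 25)   # 50000
yearly_mid = mid * 12                    # 480000

# Same team at the 5 PRs per developer per day quoted for Claude Code users.
heavy = monthly_review_cost(100, 5, 20)  # 200000

print(low, mid, high, yearly_mid, heavy)
```

At one PR per developer per day, the $40,000 figure sits in the middle of a $30,000 to $50,000 range. At five PRs per day, the same team would spend about $200,000 per month at the $20 midpoint.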

That is a significant budget. But Anthropic is explicitly targeting large enterprises: Uber, Salesforce, and Accenture are among the named customers. For these organizations, the cost of a critical production bug can far exceed the price of thousands of automated reviews.

To manage spending, Anthropic provides several controls:

- Monthly organization caps to define total spending across reviews
- Repository-level controls to enable reviews only on selected repositories
- Analytics dashboard to track reviewed PRs, acceptance rates, and costs
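To see how a monthly cap and repository-level controls interact, here is a small planning sketch. It is not an Anthropic API: the repository names, PR volumes, and the $6,000 cap are hypothetical, and the budget is computed at $25 per review, the upper end of the typical range (individual reviews can cost more).

```python
def plan_rollout(repos, monthly_cap, cost_per_review=25):
    """Enable repos in priority order while the estimated spend fits the cap.

    repos: list of (name, expected_prs_per_month), most critical first.
    Returns the enabled repo names and the estimated monthly spend.
    """
    enabled = []
    remaining = monthly_cap
    for name, prs_per_month in repos:
        estimated_cost = prs_per_month * cost_per_review
        if estimated_cost <= remaining:
            enabled.append(name)
            remaining -= estimated_cost
    return enabled, monthly_cap - remaining


# Hypothetical repositories, ordered from most to least critical.
repos = [
    ("payments", 120),
    ("auth", 80),
    ("marketing-site", 300),
    ("internal-tools", 60),
]

enabled, spend = plan_rollout(repos, monthly_cap=6_000)
print(enabled, spend)
```

With these made-up numbers, only `payments` and `auth` fit under the cap, for an estimated $5,000 per month. The high-volume `marketing-site` repository alone would exceed the entire budget.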

Claude Code Review is available only on Teams and Enterprise plans. If you are on a Pro or Free plan, you cannot access it.

The Numbers: 84% Detection Rate, Less Than 1% False Positives

Anthropic has been running Code Review internally for months. The results, detailed in their analysis, are striking:

- PRs receiving substantive review comments jumped from 16% to 54%
- On large PRs (over 1,000 lines changed), 84% surface findings, averaging 7.5 issues per PR
- On small PRs (under 50 lines), 31% receive findings
- Less than 1% of findings are marked incorrect by engineers
- Engineer productivity increased by 200% over the past year

A concrete example: during an early access test with TrueNAS, Code Review detected a latent type mismatch bug that was silently wiping an encryption key cache. A human scanning the diff would not have naturally looked in that area.

Critically, Claude Code Review never approves PRs. Approval stays human. It is an assistive tool, not a replacement.

Another telling example: in one instance, Code Review caught a single-line change that would have broken production authentication. The change looked innocuous in the diff, but the multi-agent system traced the downstream impact and flagged it before merge. This is the kind of deep, context-aware analysis that separates Code Review from simpler tools that only look at the changed lines in isolation.

Claude Code Review vs Devin Review: Free Alternative?

The most direct competitor is Devin Review, launched in January 2026 by Cognition (creators of the Devin AI agent). The price difference is radical: Devin Review is free during its early access phase.

Devin Review works differently. Rather than a multi-agent approach, it intelligently reorganizes diffs to make them more understandable. It groups logically connected changes, explains each block, and offers an interactive chat to ask questions about the code.

Using it is simple: swap "github" for "devinreview" in any PR URL. No account needed for public repos.
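The URL swap is simple enough to script. The helper below takes the description above literally and replaces "github" with "devinreview" in the host part of the URL only. The function name and the example repository are hypothetical, and the resulting domain follows from that literal reading rather than from Cognition's documentation.

```python
from urllib.parse import urlsplit, urlunsplit


def to_devin_review_url(pr_url):
    """Swap 'github' for 'devinreview' in the host of a PR URL."""
    parts = urlsplit(pr_url)
    # Touch only the host, so a repo named e.g. "github-tools" stays intact.
    host = parts.netloc.replace("github", "devinreview", 1)
    return urlunsplit((parts.scheme, host, parts.path, parts.query, parts.fragment))


# Hypothetical PR URL used only for illustration.
url = to_devin_review_url("https://github.com/example-org/example-repo/pull/123")
print(url)
```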

Devin Review's bug detection uses a color-coded severity system: red (probable bugs), orange (warnings), gray (informational). The approach is less deep than Claude Code Review but much faster and more accessible.

Cognition's official announcement emphasizes that Devin Review is built to "scale human understanding of ever-more-complex code diffs" with AI and UX combined, not to replace human judgment.

Comparison: Claude Code Review vs Devin Review vs CodeRabbit vs GitHub Copilot

| Feature | Claude Code Review | Devin Review | CodeRabbit | GitHub Copilot Review |
|---|---|---|---|---|
| Price | $15-25/review | Free (early access) | $12-24/mo/dev | Included in Pro+ ($39/mo) |
| Detection rate | 84% (large PRs) | Not disclosed | Not disclosed | Not disclosed |
| False positives | Less than 1% | Color-coded severity | Self-improving learnings | Not disclosed |
| GitHub integration | Yes (automatic) | Yes (URL swap or npx) | GitHub, GitLab, Bitbucket | Native GitHub |
| Plan required | Teams/Enterprise | Free for all | Free to Enterprise | Pro+ or Business |
| Multi-agent | Yes | No | No | No |
| Review time | ~20 minutes | A few minutes | ~2 minutes | Variable |
| Approves PRs | No | No | No | No |
| Languages | All | All | All | All |
| Focus | Deep logic bugs | Diff organization and understanding | Full review + linting | General review |

CodeRabbit deserves special attention. At $12/month on the Lite plan and $24/month on Pro, it is the most cost-effective option for continuous use. It supports GitHub, GitLab, and Bitbucket, offers one-click fix suggestions, and learns from your feedback to improve over time. For small and mid-sized teams, it is likely the best value.
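To make the per-seat versus per-review difference concrete, here is a rough break-even sketch using the prices quoted in this article ($24 per developer per month for CodeRabbit Pro, $15 to $25 per Claude Code Review run). The 20 PRs per month figure assumes one PR per working day over a four-week month. The comparison is about price only and ignores differences in review depth.

```python
def breakeven_reviews_per_month(seat_price, cost_per_review):
    """Reviews per developer per month at which both pricing models cost the same."""
    return seat_price / cost_per_review


def per_review_monthly_cost(prs_per_month, cost_per_review):
    """Monthly cost per developer under per-review pricing."""
    return prs_per_month * cost_per_review


SEAT_PRICE = 24  # CodeRabbit Pro price per developer per month, as quoted above

breakeven_low = breakeven_reviews_per_month(SEAT_PRICE, 15)
breakeven_high = breakeven_reviews_per_month(SEAT_PRICE, 25)
print(round(breakeven_low, 2), round(breakeven_high, 2))

# One PR per working day, four-week month: 20 PRs per developer.
cost_low = per_review_monthly_cost(20, 15)
cost_high = per_review_monthly_cost(20, 25)
print(cost_low, cost_high)
```

In other words, a flat $24 seat costs about the same as one to two per-review runs per month. A developer opening one PR per working day would generate $300 to $500 of per-review charges over the same period.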

GitHub Copilot also includes code review features in its Pro+ ($39/month) and Business ($19/user/month) plans, with access to premium models including Claude. It is the most integrated option if your team already lives in the GitHub ecosystem.

How to Set Up Claude Code Review with GitHub

Setup is straightforward for administrators on Teams or Enterprise plans:

1. Enable Code Review in Claude Code settings

Log into your Claude Code dashboard with an admin account. Navigate to settings and enable the Code Review feature.

2. Install the GitHub App

Claude Code Review requires installing a GitHub app on your organization. The app requests read and write permissions for contents, issues, and pull requests.

3. Select repositories

Choose which repositories should have automatic reviews enabled. This is critical for cost control: start with your most critical repos only.

4. Set spending caps

Define a maximum monthly budget to avoid surprises. Use the analytics dashboard to monitor costs in real time.

5. You are ready

Once configured, Claude Code Review triggers automatically on every new PR. Developers do not need to do anything: comments appear directly in GitHub.

For advanced customization, you can configure a CLAUDE.md file at the root of your repository to define coding rules, review standards, and project-specific best practices. This is the same configuration file used by Claude Code for coding, which means your review rules stay consistent with your development guidelines.

A key detail: unlike the Claude Code GitHub Action (which is open source and free, but lighter in scope), Code Review runs automatically without any developer action. The GitHub Action requires an @claude mention to trigger and is better suited for ad-hoc requests. Code Review is the always-on, deep-analysis layer that runs on every single PR.

Best AI Code Review Tool in 2026

The AI code review market is exploding in 2026. Developer AI adoption has reached 84%, and AI-assisted commits now represent 41% of all commits. Monthly merged PRs exceed 43 million globally.

In this context, here is our verdict by profile:

For large enterprises (100+ devs): Claude Code Review is the premium choice. The cost is justified by depth of analysis, the 84% detection rate, and seamless integration. If your teams already use Claude Code for coding, this is the logical next step.

For mid-sized teams (10-50 devs): CodeRabbit offers the best balance. At $24/month per developer, costs are predictable, and multi-platform support (GitHub, GitLab, Bitbucket) is a major advantage.

For startups and solo developers: Devin Review is unbeatable right now. Free, no account required for public repos, with a UX designed to quickly understand complex diffs.

For teams already on GitHub: GitHub Copilot Pro+ is the most integrated solution. At $39/month, you get code review plus all other Copilot features.

The Controversy: Anthropic Gets You Addicted, Then Charges You

The sharpest criticism of Claude Code Review boils down to one sentence: "Anthropic gets you addicted to Claude Code writing bad code, then charges you to review it."

There is a kernel of truth here. Claude Code generates code at breakneck speed, boosting productivity by 200%. But that speed creates a volume of PRs that human teams can no longer absorb. The solution? Pay Anthropic to review the code that Anthropic helped write.

On LinkedIn, one comment captured the tension perfectly: "Same Claude will generate code and same Claude will review. And if issues are found in review, what does it mean for the original code generated?"

The parallel to other industries is striking. It is like a car manufacturer selling vehicles with mediocre brakes, then offering a premium brake inspection service.

Anthropic's response is that Code Review is not limited to Claude-generated code: it reviews all code, including human-written code. And the results speak for themselves: less than 1% false positives, 84% detection on large PRs.

The financial context adds fuel to the debate. Claude Code now generates an annualized revenue of $2.5 billion according to Forbes, having doubled since early 2026. Anthropic's total annualized revenue has reached $14 billion. Code Review is clearly a new revenue stream for the company.

Claude Code Review Limitations

Despite its performance, Claude Code Review has important limitations:

Unpredictable costs. Unlike CodeRabbit (fixed monthly pricing), usage-based billing can create surprises. A large, complex PR can cost well above $25.

It is slow. 20 minutes per review is an eternity compared to CodeRabbit's 2 minutes or a linter's seconds. For teams that merge fast, this is a bottleneck.

Enterprise only. No individual plan, no Pro plan. If you are not on Teams or Enterprise, you cannot use it.

Not a full security tool. Claude Code Review provides light security analysis. For deep security scanning, Anthropic recommends Claude Code Security, a separate product.

Research preview may change. The product is in research preview. Features, accuracy, and pricing may evolve.

GitHub only. No GitLab or Bitbucket integration yet. If your code is not on GitHub, Code Review is not for you.

Real-World Use Cases for Claude Code Review

To understand the real value of Claude Code Review, you need to look beyond marketing numbers and examine concrete scenarios.

Scenario 1: Massive refactoring. Your team is migrating a monolith to microservices. Each PR touches hundreds of files. A human reviewing that for an hour will inevitably miss things. Claude Code Review deploys multiple agents scrutinizing every dimension: one verifies interface consistency, another tracks regressions, a third ensures cross-service calls are correct. This is exactly the use case where 20 minutes and $25 pay for themselves.

Scenario 2: The vibe-coding team. Five junior developers use Claude Code to generate code at full speed. The lead developer is drowning in PRs to review. Enabling Code Review on the main repo unblocks the bottleneck: every PR arrives pre-analyzed with critical bugs already identified. The lead can focus on architectural decisions instead of hunting null pointer exceptions.

Scenario 3: Regulatory compliance. In banking, healthcare, or defense, every deployed line of code must be traced and reviewed. Claude Code Review provides an automated, detailed report for each PR, creating a permanent audit trail. At $25 per review, that is negligible compared to the cost of a failed compliance audit.

Scenario 4: Open source maintenance. This is where it falls short. If you maintain an open source project, Claude Code Review is not built for you. The Teams plan is designed for paying organizations, and per-review pricing is prohibitive for community projects. Devin Review or CodeRabbit Free are far better options.

Who Is Claude Code Review For?

It is for you if:

- Your team of 50+ developers already uses Claude Code
- You have hundreds of PRs per week getting only a quick "LGTM"
- The cost of a production bug far exceeds $25
- You are on an Anthropic Teams or Enterprise plan

It is not for you if:

- You are a solo developer or small team
- Your devtools budget is tight
- You need instant reviews
- Your code lives on GitLab or Bitbucket
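The criterion "the cost of a production bug far exceeds $25" can be made more precise with a simple expected-value check. The sketch below assumes you can estimate how many reviews it takes, on average, to stop one bug that would otherwise reach production. That number is a hypothetical input you would need to measure yourself; it is not a figure from Anthropic.

```python
def breakeven_bug_cost(cost_per_review, reviews_per_prevented_bug):
    """Bug cost above which paying for every review is cheaper on average.

    reviews_per_prevented_bug is a hypothetical input: how many reviews it
    takes, on average, to stop one bug that would have reached production.
    """
    if reviews_per_prevented_bug < 1:
        raise ValueError("reviews_per_prevented_bug must be at least 1")
    return cost_per_review * reviews_per_prevented_bug


# Break-even bug cost at $25 per review for three hypothetical catch rates.
thresholds = {n: breakeven_bug_cost(25, n) for n in (10, 100, 1000)}
for n, cost in thresholds.items():
    print(f"1 prevented bug per {n} reviews -> break-even bug cost ${cost}")
```

If one review in a hundred prevents a production incident, the tool pays for itself as soon as such an incident costs more than $2,500. If it takes a thousand reviews, the bar rises to $25,000.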

Why Anthropic Is Launching Code Review Now

To understand Claude Code Review, you need to understand the moment. Anthropic is no longer just another AI startup. With an annualized revenue of $14 billion according to the Economic Times, it is the fastest-growing software company in history. Claude Code alone represents $2.5 billion in run rate, having doubled in just six weeks since January 2026.

Over 500 organizations now pay more than $1 million per year for the Claude suite. Enterprise customers spending over $100,000 annually have grown 7x in one year. Business subscriptions to Claude Code have quadrupled since January.

In this context, Code Review is not just a feature: it is a natural extension of the ecosystem. Anthropic identified that its own engineers' productivity increased by 200%, creating an explosion of PRs. Human code review could not keep up. The problem they solved internally, they are now productizing for their enterprise customers.

The timing is also strategic. Cognition launched Devin Review in January, CodeRabbit continues to grow, and GitHub Copilot keeps adding review capabilities. Anthropic needed to fill this gap in its offering before customers went looking for third-party solutions.

Claude Code Review is an ambitious product addressing a real problem: the code review bottleneck in the age of AI. The pricing is debatable, free alternatives exist, but for enterprises that measure the real cost of production bugs, $25 per review might just be a bargain.


Copyright © 2026 Emelia All Rights Reserved