OpenViking: ByteDance's Open-Source Context Database That Gives AI Agents Real Memory

Published on March 18, 2026. Updated on May 27, 2026.

The Fundamental Memory Problem in AI Agents

AI agents have a memory problem. Not a minor inconvenience, but a structural flaw. You can connect the most powerful model on the market to every tool imaginable, give it access to your files, emails, and calendars. Ten minutes into a complex task, it forgets the constraints you set at the beginning. It hallucinates details. It loses the thread.

Developers sometimes call this the "goldfish problem." Large language models, however impressive, operate within a limited context window. When information volume exceeds that window, you face a painful choice: either starve the model of context and it cannot reason properly, or flood it with data and it drowns in noise, driving up token costs while degrading output quality.

The standard solution has been RAG (Retrieval-Augmented Generation). On paper, it sounds elegant: chunk your documents, vectorize them, retrieve the relevant fragments when the model needs them. In practice, the results are often disappointing. Developers use the term "vector soup" to describe what happens when you dump PDF contracts, Python code, Slack logs, and images into the same flat vector space. The system finds keywords, but it loses structure, hierarchy, and the relationships between pieces of information.

This is exactly the problem that OpenViking, an open-source project from ByteDance's Volcano Engine team, claims to solve. Its approach departs sharply from the flat vector-store design that most RAG stacks rely on.

OpenViking: A Context Database, Not a Vector Database

OpenViking does not position itself as yet another vector database. The ByteDance team calls it a "Context Database," a database designed specifically for AI agents. The distinction is not cosmetic. It reflects a fundamentally different architectural philosophy.

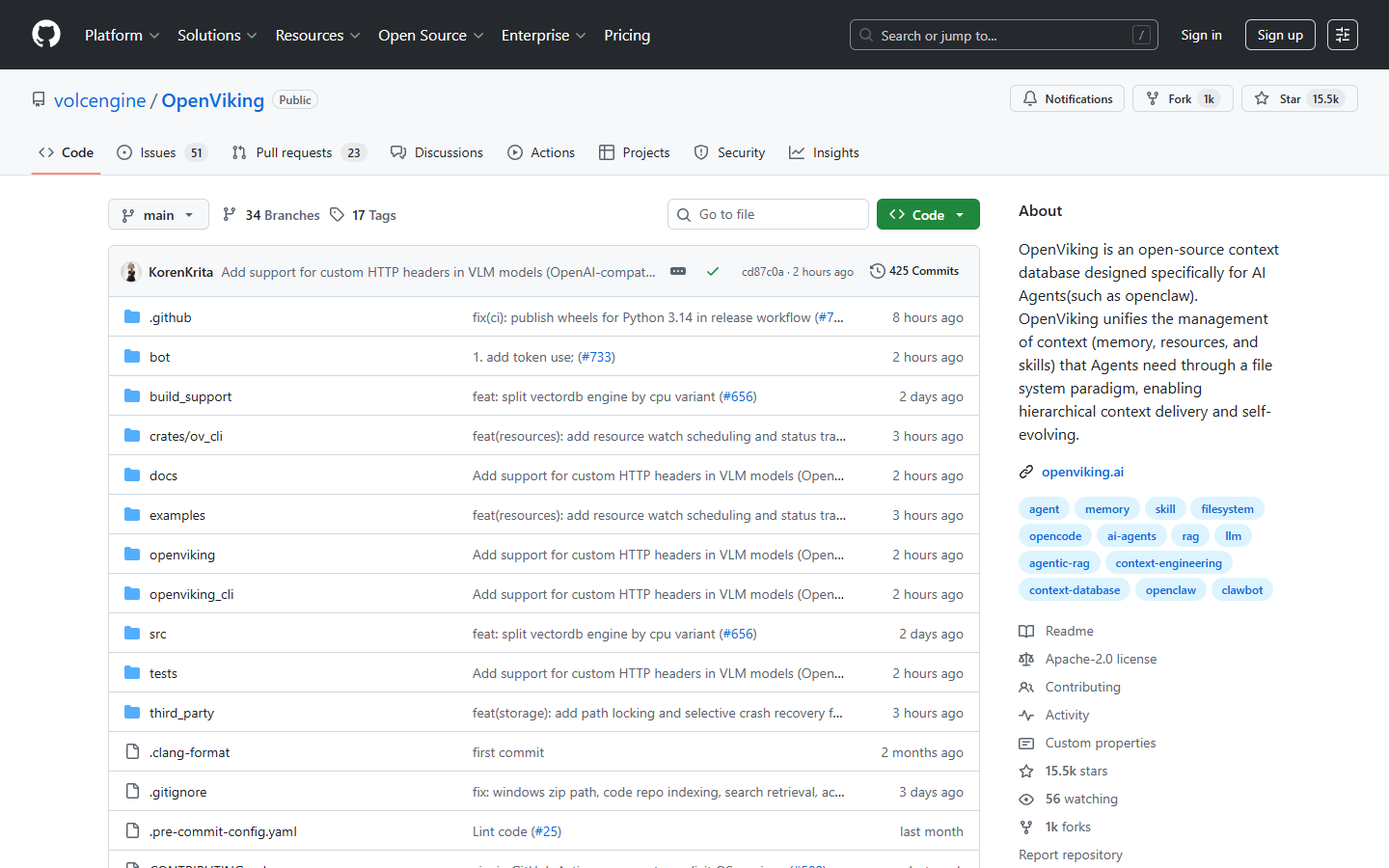

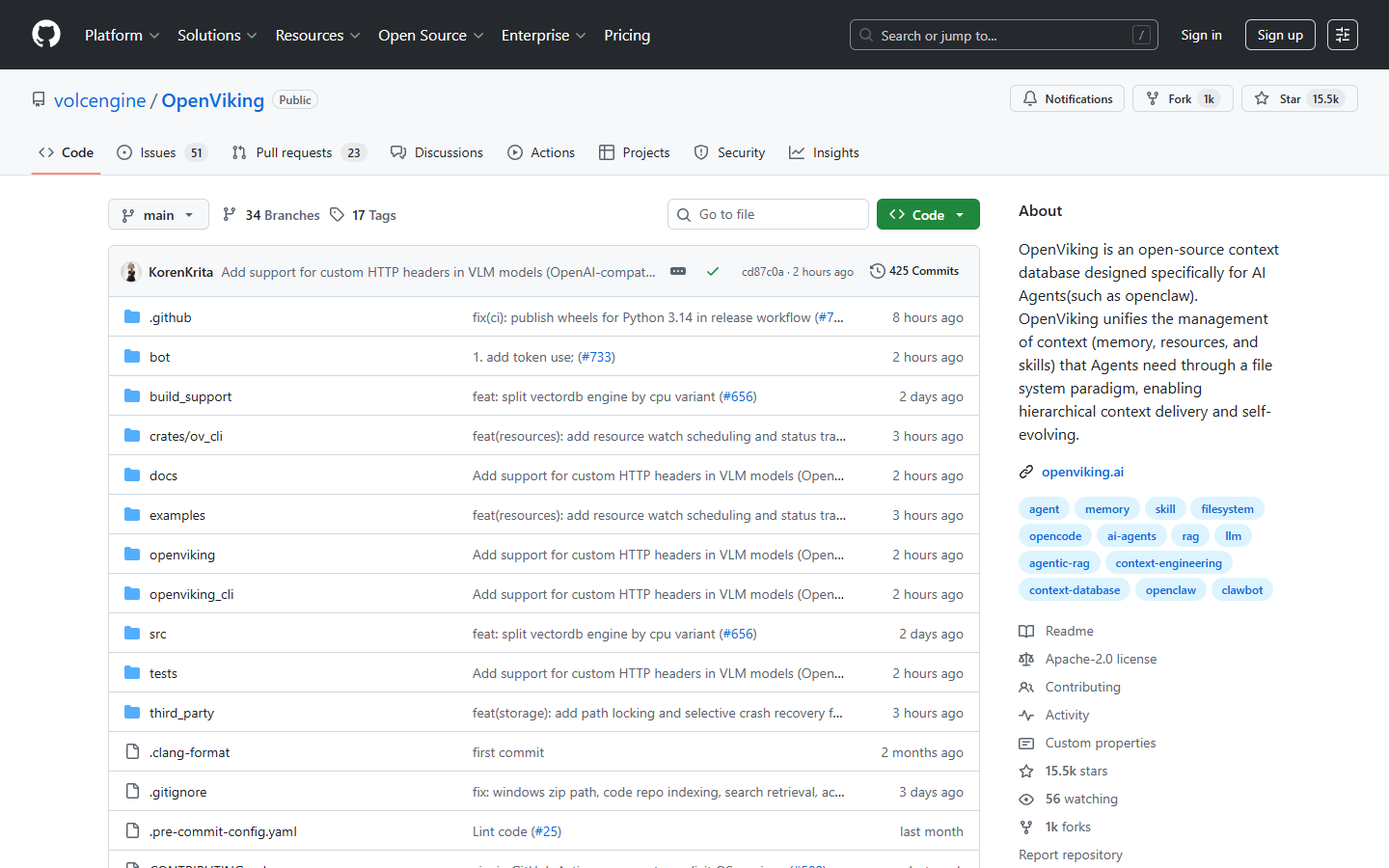

The project was initiated and is maintained by the Viking team at Volcano Engine, ByteDance's cloud division. Open-sourced in January 2026, it has rapidly accumulated over 15,000 GitHub stars and approximately 1,000 forks. This rapid adoption is partly explained by its native compatibility with OpenClaw, the open-source AI agent framework that has taken China and the rest of the world by storm in recent weeks, with integrations launched by Tencent, Alibaba, Xiaomi, and other tech giants.

OpenViking's mission is clear: unify the management of all context an AI agent needs (memory, resources, and skills) into a single structured, observable, and evolvable infrastructure.

The Filesystem Paradigm: Organizing Context Like Files

OpenViking's central innovation is treating AI agent context exactly like a filesystem on a computer. Instead of storing text chunks in a flat vector space, OpenViking organizes all information into a hierarchy of directories accessible through a dedicated protocol: viking://.

The Directory Structure

OpenViking's virtual filesystem is organized around three root directories:

viking://resources/ contains the raw data the agent works with: PDF documents, codebases, images, technical specifications. This is the working material.

viking://user/ stores everything about the user: preferences, interaction history, personal context. If you tell your agent you prefer Python over Java, that preference gets saved to viking://user/memories/preferences and will be available in future sessions.

viking://agent/ houses the agent's own skills and operational experience. Skills like "search code" or "generate image" are stored as files in this directory tree.
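To make the addressing scheme concrete, here is a minimal sketch of how a viking:// URI could be split into its root directory and path segments. The helper name split_viking_uri and the validation rules are assumptions for illustration, not part of OpenViking itself; only the three root names come from the description above.

```python
from urllib.parse import urlparse

# The three root directories described in the article.
ROOTS = {"resources", "user", "agent"}


def split_viking_uri(uri: str) -> tuple[str, list[str]]:
    """Split a viking:// URI into (root directory, remaining path segments).

    Hypothetical helper for illustration; not an OpenViking function.
    """
    parsed = urlparse(uri)
    if parsed.scheme != "viking":
        raise ValueError(f"not a viking:// URI: {uri!r}")
    # For scheme://first/rest, urlparse places the first segment in netloc.
    root = parsed.netloc
    if root not in ROOTS:
        raise ValueError(f"unknown root directory: {root!r}")
    segments = [part for part in parsed.path.split("/") if part]
    return root, segments


root, segments = split_viking_uri("viking://user/memories/preferences")
print(root, segments)  # user ['memories', 'preferences']
```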

Familiar Operations

The agent interacts with this filesystem through standard operations: ls to list directory contents, find to search for an element, read to open a file, overview to get a directory summary. This familiarity is not trivial. It transforms the agent from a "probabilistic guesser" into a "deterministic navigator." Instead of asking "give me anything that vaguely resembles X," the agent can methodically browse its own brain.

OpenViking's minimal API reflects this philosophy: client.add_resource(path) to add resources, client.search(query) to search, client.read(uri) to read, client.ls(uri) to browse a directory. A few calls are enough to integrate the system into an existing workflow, including popular frameworks like LangChain.
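The shape of these four calls can be illustrated with a small in-memory stand-in. This is a sketch, not the real OpenViking client: the class name ToyContextClient, the extra text argument to add_resource, the return types, and the keyword matching that replaces semantic search are all assumptions made so the example runs on its own.

```python
class ToyContextClient:
    """In-memory stand-in for illustration; NOT the real OpenViking client."""

    def __init__(self) -> None:
        self._files: dict[str, str] = {}

    def add_resource(self, path: str, text: str) -> str:
        # Assumption: resources are filed under viking://resources/<path>.
        uri = f"viking://resources/{path.strip('/')}"
        self._files[uri] = text
        return uri

    def read(self, uri: str) -> str:
        return self._files[uri]

    def ls(self, uri: str) -> list[str]:
        # List direct children; subdirectories keep a trailing slash.
        prefix = uri.rstrip("/") + "/"
        children = set()
        for stored in self._files:
            if stored.startswith(prefix):
                name, sep, _ = stored[len(prefix):].partition("/")
                children.add(name + sep)
        return sorted(children)

    def search(self, query: str) -> list[str]:
        # Naive keyword match standing in for semantic search.
        words = query.lower().split()
        return sorted(
            uri for uri, text in self._files.items()
            if any(word in text.lower() for word in words)
        )


client = ToyContextClient()
specs = client.add_resource("project-a/specs.md", "Authentication uses OAuth tokens")
client.add_resource("project-a/code/auth.py", "def login(): ...")
print(client.ls("viking://resources/project-a"))  # ['code/', 'specs.md']
print(client.search("oauth"))  # ['viking://resources/project-a/specs.md']
```

The point of the sketch is the navigation pattern: the agent lists a directory, narrows down, and only then reads a specific URI.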

Tiered Context Loading L0/L1/L2: The Intelligence of Parsimony

OpenViking's second technical pillar is its tiered context loading system. Every piece of context is automatically processed into three levels of detail on write:

L0 (Abstract): a one-sentence summary, under 100 tokens. It is the equivalent of an enriched file title with minimal description. Enough for the agent to know if the information is relevant without wasting tokens.

L1 (Overview): a summary containing essential information, structure, and usage scenarios. Under 2,000 tokens. For a coding project, this would be the equivalent of the README file or API summary. This level is sufficient for most planning decisions.

L2 (Detail): the complete content, loaded only on demand via its URI. This is the deep read, reserved for the moment the agent actually needs to dive into the details.
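The three tiers can be pictured as one record holding three renderings of the same content. The sketch below is hypothetical: the ContextEntry class, the whitespace-based token estimate, and the budget rule are assumptions for illustration, not how OpenViking stores or selects levels.

```python
from dataclasses import dataclass


@dataclass
class ContextEntry:
    """One piece of context held at three levels of detail (hypothetical)."""
    uri: str
    l0_abstract: str   # one-sentence summary
    l1_overview: str   # structure and essentials
    l2_detail: str     # full content

    def load(self, level: int) -> str:
        return (self.l0_abstract, self.l1_overview, self.l2_detail)[level]


def rough_tokens(text: str) -> int:
    # Crude whitespace estimate; a real system would use a tokenizer.
    return len(text.split())


def deepest_level_within(entry: ContextEntry, budget: int) -> int:
    """Return the deepest level that fits the budget, always allowing L0."""
    level = 0
    for candidate in (1, 2):
        if rough_tokens(entry.load(candidate)) > budget:
            break
        level = candidate
    return level


entry = ContextEntry(
    uri="viking://resources/code/auth/",
    l0_abstract="Authentication module summary.",
    l1_overview="word " * 10,
    l2_detail="word " * 50,
)
print(deepest_level_within(entry, budget=20))  # 1
```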

The Impact on Token Consumption

The results published by the ByteDance team on the LoCoMo10 dataset are striking. Combining OpenClaw with OpenViking raises the task completion rate from 35.65% to 52.08%, while input token consumption drops from 24.6 million to 4.3 million, a reduction of over 80%. With the memory-core option enabled, consumption drops further to 2.1 million tokens for a similar completion rate.
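The percentages follow directly from the reported figures. The short calculation below uses only the numbers quoted above; the variable and function names are illustrative.

```python
# Figures quoted from the published LoCoMo10 results.
baseline_tokens = 24.6e6
with_openviking = 4.3e6
with_memory_core = 2.1e6


def reduction_pct(before: float, after: float) -> float:
    """Percentage reduction from before to after."""
    return (before - after) / before * 100


print(f"{reduction_pct(baseline_tokens, with_openviking):.1f}%")   # 82.5%
print(f"{reduction_pct(baseline_tokens, with_memory_core):.1f}%")  # 91.5%

# Completion rate gain in percentage points: 52.08 - 35.65
print(f"{52.08 - 35.65:.2f} points")  # 16.43 points
```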

This tiered approach means the agent first "skims" its context at the L0 level, refines its understanding at L1 for planning, and only loads the full L2 content when it genuinely needs it for task execution. This eliminates the classic problem of context windows saturated with irrelevant noise.

Directory Recursive Retrieval: Search That Understands Structure

OpenViking's third pillar is its retrieval strategy, called Directory Recursive Retrieval. It addresses a fundamental weakness of standard vector search.

In a traditional RAG system, when you ask a complex question like "how is the authentication module architected?", the system performs a semantic similarity search and returns a set of text fragments deemed relevant. You get a comment from the code, a line from the README, and a sentence from an email. Three disconnected fragments with no hierarchical context.

OpenViking works differently, in three steps:

Intent analysis: the system first breaks down what you are actually looking for.

Initial positioning: vector search is used not to find a paragraph, but to identify the most relevant directory. The system looks for the right folder first, not the right fragment.

Recursive exploration: once the directory is identified (for example, viking://resources/code/auth/), the system explores its contents recursively, using both semantic search and navigation operations (glob, grep) to locate information with surgical precision.
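The three steps above can be sketched on a toy directory tree. Everything here is an assumption made for illustration: the nested-dict tree, the function names, and above all the word-overlap score, which stands in for the vector similarity a real system would use. It is not OpenViking's retrieval code.

```python
import re


def keywords(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def all_text(node) -> str:
    """Concatenate names and file contents below a node (dict = directory)."""
    if isinstance(node, str):
        return node
    return " ".join(f"{name} {all_text(child)}" for name, child in node.items())


def explore(words: set[str], node: dict, uri: str) -> list[tuple[int, str]]:
    """Recursively collect (score, uri) for every matching file under node."""
    hits = []
    for name, child in node.items():
        child_uri = f"{uri}/{name}"
        if isinstance(child, dict):
            hits.extend(explore(words, child, child_uri))
        else:
            overlap = len(words & keywords(f"{name} {child}"))
            if overlap > 0:
                hits.append((overlap, child_uri))
    return hits


def retrieve(query: str, tree: dict, base: str = "viking://resources") -> list[str]:
    words = keywords(query)                                  # 1. intent analysis (toy)
    dirs = {n: c for n, c in tree.items() if isinstance(c, dict)}
    best = max(dirs, key=lambda n: len(words & keywords(all_text(dirs[n]))))
    hits = explore(words, dirs[best], f"{base}/{best}")      # 3. recursive exploration
    return [uri for _, uri in sorted(hits, key=lambda h: (-h[0], h[1]))]


tree = {
    "code": {
        "auth": {"login.py": "authentication module entry point",
                 "tokens.py": "token refresh logic"},
        "billing": {"invoice.py": "invoice totals"},
    },
    "docs": {"onboarding.md": "welcome guide for new hires"},
}
print(retrieve("How is the authentication module architected?", tree))
# ['viking://resources/code/auth/login.py']
```

The line that computes best is step 2, initial positioning: the score is used to choose a directory first, and individual files are ranked only inside it.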

The analogy is straightforward: instead of teleporting into a random room that "looks like" what you are searching for, the agent enters through the front door and walks through the house room by room, understanding the structure of the whole building.

Observability: The End of the Black Box

A rarely mentioned but crucial problem with traditional RAG systems is debugging. When an agent gives a wrong answer, it is often impossible to understand why. Which fragment was retrieved? Why that one instead of another? Is the embedding model at fault, or the chunking strategy?

OpenViking solves this through its visualized retrieval trajectory. Since the retrieval process involves navigating directories and positioning files, the system can log every step of the journey. You can examine a log and see exactly: "the agent went to viking://resources/, then to project-a/, then opened specs.md at L1, then loaded the L2 of auth-module.md."
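A retrieval trajectory of this kind is essentially an ordered list of navigation steps. The following sketch shows one way such a log could be recorded and rendered; the RetrievalTrace class and its output format are hypothetical and do not reproduce OpenViking's actual logs.

```python
from dataclasses import dataclass, field


@dataclass
class RetrievalTrace:
    """Ordered record of navigation steps (hypothetical structure)."""
    steps: list[tuple[str, str]] = field(default_factory=list)

    def record(self, action: str, target: str) -> None:
        self.steps.append((action, target))

    def render(self) -> str:
        return "\n".join(
            f"{i}. {action} {target}"
            for i, (action, target) in enumerate(self.steps, start=1)
        )


# Replaying the example path from the paragraph above.
trace = RetrievalTrace()
trace.record("ls", "viking://resources/")
trace.record("ls", "viking://resources/project-a/")
trace.record("read L1", "viking://resources/project-a/specs.md")
trace.record("read L2", "viking://resources/project-a/auth-module.md")
print(trace.render())
```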

This transparency fundamentally changes the relationship between developer and context system. You move from an impenetrable black box to a system whose behavior you can observe, understand, and optimize.

Comparison: OpenViking vs. Existing Solutions

To position OpenViking in the current landscape, here is a comparison with alternative approaches to context management for AI agents:

| Criterion | Classic vector RAG | Knowledge graphs | OpenViking |
|---|---|---|---|
| Context structure | Flat chunks, similarity search | Entities and relationships in a graph | File hierarchy (viking://) |
| Retrieval strategy | One-shot semantic search | Graph traversal | Directory Recursive Retrieval |
| Token consumption | High (full chunks every time) | Moderate | Optimized (L0/L1/L2 loading) |
| Observability | Black box | Partial (graph visualization) | Full retrieval trajectory |
| Persistent memory | Limited (chat history) | Possible but complex | Native (viking://user/ and viking://agent/) |
| Automatic evolution | No | No | Yes (session-based self-evolution) |
| Learning curve | Low | High | Moderate (familiar filesystem paradigm) |
| Agent integration | Generic | Specific | Built for agents (OpenClaw compatible) |

Self-Evolution: An Agent That Learns from Every Session

Perhaps OpenViking's most forward-looking feature is its self-evolution capability. The system does not just read files; it writes them too. At the end of each session, OpenViking triggers a memory extraction process that analyzes task results and user interactions.

If you state a preference, it gets compressed and saved to viking://user/memories/. If the agent discovers a particularly effective method for solving a problem, or fails with a specific tool, it extracts that operational lesson and updates its own viking://agent/skills/ directory.
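As a rough illustration of end-of-session extraction, the sketch below pulls stated preferences out of a transcript and files them under the preferences URI. It is a toy under stated assumptions: a real system would presumably use a model for extraction, whereas this example uses a regular expression, and the function names and the dict-based store are hypothetical.

```python
import re

# Toy pattern; real extraction would likely rely on a language model.
PREFERENCE = re.compile(r"\bI prefer (\w+) over (\w+)", re.IGNORECASE)


def extract_preferences(messages: list[str]) -> list[str]:
    found = []
    for message in messages:
        for liked, other in PREFERENCE.findall(message):
            found.append(f"prefers {liked} over {other}")
    return found


def end_of_session(store: dict[str, list[str]], messages: list[str]) -> None:
    """Append newly extracted preferences, skipping ones already stored."""
    uri = "viking://user/memories/preferences"
    known = store.setdefault(uri, [])
    for memory in extract_preferences(messages):
        if memory not in known:
            known.append(memory)


store: dict[str, list[str]] = {}
end_of_session(store, ["I prefer Python over Java for scripts.", "Please fix the bug."])
end_of_session(store, ["As I said, I prefer Python over Java."])
print(store)
# {'viking://user/memories/preferences': ['prefers Python over Java']}
```

The duplicate check matters because the same preference tends to be restated across sessions, and a memory store that grows on every repetition would defeat the purpose of compressing it.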

In practice, this means your Tuesday agent is better than your Monday agent. Not because the model weights changed, but because its context database, its "brain," has become richer and more relevant. The analogy often used is the difference between a temp worker who forgets everything at 5 PM and an employee who keeps a detailed journal of what works and what does not.

This capability also raises questions. If an agent can rewrite its own skills directory, it is essentially reprogramming itself based on experience. This is a powerful mechanism, but one that requires careful governance in professional settings.

Technical Architecture and Installation

Under the hood, OpenViking is primarily written in Python (roughly 78% of the codebase), with high-performance components in C++ (roughly 21%). The architecture is modular, with separate modules for storage, retrieval, parsing, and session management.

Prerequisites

Installation requires Python 3.10 or higher, Go 1.22 or higher, and GCC 9+ or Clang 11+. The system runs on Linux, macOS, and Windows. Basic installation is done via pip:

pip install openviking --upgrade --force-reinstall

An optional Rust CLI called ov_cli can be installed separately via script or built with Cargo.

Required Models

OpenViking requires two types of models to function: a VLM (Vision Language Model) for understanding images and multimodal content in your resources, and an embedding model for vectorization and semantic retrieval.

Supported providers include OpenAI (GPT-4 Vision, text-embedding-3-large), Volcengine (Doubao models), and LiteLLM for compatibility with other providers. Configuration is handled through an ov.conf file where you specify the provider and API keys. Switching between providers is trivial: just change the configuration string.

The OpenClaw Ecosystem and China's Agentic AI Boom

OpenViking cannot be understood in isolation from the ecosystem it belongs to. The project is designed to work with OpenClaw, the open-source AI agent framework created by Austrian developer Peter Steinberger (since hired by OpenAI), which became a phenomenon in China in early 2026.

OpenClaw is an "agentic harness" that connects a language model to local tools (email, calendar, browser, terminal) through messaging platforms like Telegram or WhatsApp. The framework has been adopted massively by Chinese tech giants: Tencent launched QClaw integrated with WeChat, ByteDance deployed ArkClaw through Volcano Engine, Alibaba created JVS Claw, and Xiaomi is testing MiClaw for smart device control.

Within this booming ecosystem, OpenViking serves as the "persistent brain" for these agents. Where OpenClaw provides the orchestration structure and tool connections, OpenViking provides structured memory, context management, and learning across sessions.

What This Means for the Future of AI Agents

OpenViking's approach represents a maturity shift in agentic AI development. We are leaving the chatbot era (which merely reacts to the last thing said) and entering the agent era (which can plan, remember, and execute over time).

Building a truly autonomous agent requires persistence and structure. You cannot build a skyscraper on loose gravel. OpenViking's filesystem paradigm, with its viking:// protocol, provides the architectural blueprint that AI agent memory has been missing.

According to Gartner, 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025. If this projection holds, context management will become a central concern. Solutions like OpenViking, which offer a structured approach rather than simple vector storage, could become the norm rather than the exception.

The project is still young (version 0.2.1 at the time of writing), and the ByteDance team plans a second phase focused on a plugin ecosystem, third-party extensions, and integration with other AI frameworks. It is a project worth watching closely for anyone building or planning to build AI agent systems.
