Voltar ao hub

Blog

IA

DeepSeek V4: Complete Guide to the 1T AI Model

Publicado em 10 de mar. de 2026Atualizado em 18 de mar. de 2026

At Emelia, our B2B prospecting platform, we integrate artificial intelligence models at the core of our features, from automated cold email writing to data enrichment. At Bridgers, our digital and AI agency, we help businesses implement custom AI solutions. And with Maylee, our AI-native email client, we push the boundaries of productivity through artificial intelligence. When a model the size of DeepSeek V4 appears on the horizon, with 1 trillion parameters and native multimodal capabilities, we consider it essential to provide a thorough analysis of what this means for professionals and businesses.

What Is DeepSeek V4?

DeepSeek V4 is the next major large language model developed by DeepSeek, the Chinese AI laboratory that shook the industry in 2026 with its V3 model and the DeepSeek-R1 series. If the information circulating in the community proves accurate, V4 represents a generational leap on multiple fronts.

The model is said to be built on a Mixture-of-Experts (MoE) architecture totaling approximately 1 trillion parameters, roughly 50% more than V3's 671 billion. But one of the key innovations lies in efficiency: only about 32 billion parameters are active per generated token, down from 37 billion in V3. In other words, DeepSeek V4 would be both more powerful and lighter at inference time.

The architecture combines several key innovations:

MoE (Mixture-of-Experts): activates only a fraction of parameters per query, dramatically reducing compute costs.

MLA (Multi-head Latent Attention): an optimized version of multi-head attention, already present in V3.

Engram Memory: a conditional memory system, documented in a research paper published on January 12, 2026 (arXiv:2601.07372), enabling the model to store and recall information more efficiently.

DSA (Dynamic Sparse Attention): a dynamic sparse attention mechanism that enables the massive context window.

And that context window may be the most dramatic change for end users: it jumps from 128,000 tokens (V3) to 1 million tokens. This is not just a marketing claim. Since February 11, 2026, DeepSeek has silently expanded the context window on its existing API to 1 million tokens, suggesting the technology is already running in production.

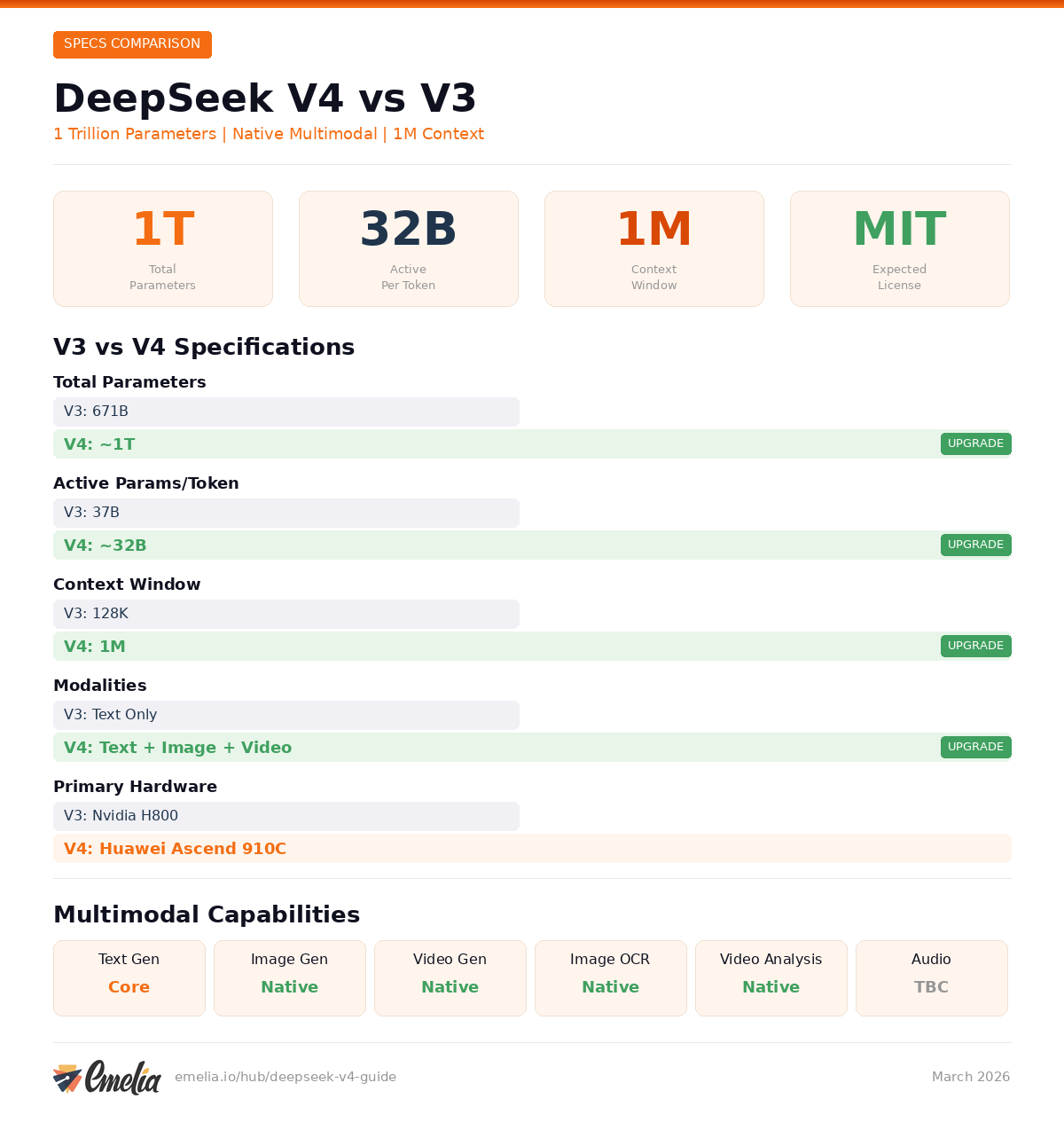

What's New Compared to DeepSeek V3?

To measure the scale of the upgrade, here is a direct comparison:

Specification | DeepSeek V3 | DeepSeek V4 (Expected) |

|---|---|---|

Total Parameters | 671 billion | ~1 trillion |

Active Parameters per Token | 37 billion | ~32 billion |

Context Window | 128,000 tokens | 1,000,000 tokens |

Modalities | Text only | Text + Image + Video |

Primary Hardware | Nvidia H800 | Huawei Ascend 910C |

License | MIT | MIT (expected) |

Three elements stand out. First, the reduction in active parameters despite the massive increase in total parameters reflects remarkable optimization work. Second, the jump to 1 million tokens of context places V4 in a category of its own: it becomes possible to process entire documents, complete codebases, or research corpora in a single query. Third, the shift to native multimodal fundamentally transforms the nature of the model.

What Are DeepSeek V4's Multimodal Capabilities?

Unlike V3, which was limited to text, DeepSeek V4 is designed from the ground up as a multimodal model. Here is a breakdown of expected capabilities:

Capability | Description | Status |

|---|---|---|

Text Generation | Writing, code, analysis, translation | Confirmed |

Image Understanding | Visual analysis, OCR, visual Q&A | Confirmed |

Image Generation | Text-to-image, design assistance | Confirmed |

Video Understanding | Summarization, video content analysis | Confirmed |

Video Generation | Short animated sequences | Confirmed |

Audio Processing | Transcription, voice analysis | Unconfirmed |

The fact that these capabilities are native, not bolted on after the fact, is a crucial point. The most performant multimodal models are generally those where different modalities were integrated during training, rather than added through supplementary modules. This suggests a deeper understanding of the relationships between text, image, and video.

Benchmark Leaks and Performance: What Do We Know?

No official benchmarks have been published, but internal leaks have circulated in the community:

HumanEval (code evaluation): 90%, a score that would place V4 above most competing models.

SWE-bench (real software bug resolution): above 80%, suggesting practical capabilities in software engineering.

Scores on MMLU-Pro and GPQA Diamond have also leaked but remain unconfirmed.

According to these same leaks, DeepSeek V4 would outperform Claude and GPT on programming tasks. This remains to be verified with independent benchmarks, but DeepSeek's trajectory, where V3 had already surprised the industry, makes these figures plausible.

The Huawei Angle: AI Independence from Nvidia

One of the most strategic aspects of DeepSeek V4 concerns hardware. While V3 was trained on Nvidia H800 GPUs, V4 marks a turning point: the model is reportedly optimized to run on Huawei Ascend 910B and 910C chips.

DeepSeek allegedly received early access to Huawei chips, a privilege not extended to Nvidia or AMD. If confirmed, DeepSeek V4 would become the first trillion-parameter model optimized entirely outside the Nvidia ecosystem.

The implications are significant. For the Chinese AI ecosystem, it demonstrates that training and deploying state-of-the-art models is possible without depending on American semiconductor exports. For international businesses, it means a credible alternative to Nvidia infrastructure is beginning to emerge, even though chip-for-chip performance still favors Nvidia for now.

In practice, DeepSeek reportedly used Nvidia chips for training and relegated Huawei chips to inference. A pragmatic approach that could evolve with the next generation of Ascend chips.

Business Use Cases and Applications for DeepSeek V4

This is where DeepSeek V4 becomes concretely interesting for professionals. Here are the most promising usage scenarios.

Enterprise Coding Assistant

With a 90% score on HumanEval and a 1 million token context window, V4 could analyze entire codebases in a single query. For a development team, this means the ability to submit a complete GitHub repository and request a code review, refactoring suggestions, or vulnerability detection across the entire project. Not file by file, but the project as a whole.

Consider a SaaS company maintaining a monorepo with 200,000 lines of code. Today, AI-assisted code review requires slicing the codebase into chunks, losing cross-file context in the process. With a 1 million token window, V4 could ingest the entire repository, understand how services interact, and flag architectural issues that span multiple modules. For teams practicing continuous integration, this could dramatically reduce the time between pull request and merge.

Large-Scale Document Analysis

The 1 million token window allows loading massive documents: complete annual reports, contracts spanning hundreds of pages, regulatory filings. A law firm could submit an entire litigation file and ask the model to identify every clause related to liability limitations or indemnification, cross-referencing across dozens of exhibits and amendments. A financial analyst could load four consecutive quarterly reports from a public company and request a trend analysis of operating margins, cash flow patterns, and management commentary shifts.

For compliance teams, the ability to process an entire regulatory framework alongside internal policy documents in a single query could transform audit preparation from a weeks-long manual effort into a matter of hours.

Multimodal Content Creation

For marketing teams and content creators, native multimodal capabilities open unprecedented possibilities. Imagine a tool capable of simultaneously generating article text, accompanying illustrations, and a short promotional video, all coherent and aligned with a single brief.

A practical example: a product marketing manager preparing a launch campaign could feed the model a product spec sheet and brand guidelines, then request a blog post draft, three social media visual variants, and a 15-second teaser animation, all maintaining visual and tonal consistency. While each individual output would still need human review and refinement, the time savings on the first draft phase could be substantial.

Cost-Effective Alternative to GPT and Claude APIs

DeepSeek has positioned itself as a significantly cheaper alternative to OpenAI and Anthropic models. If V4 maintains this pricing strategy (V3 was trained for approximately $5.6 million), businesses that consume API calls at scale could achieve substantial savings without sacrificing quality.

For startups and mid-size companies running AI-powered features, API costs are a real concern. A company processing 10 million tokens per day through GPT-4 class models can easily spend $10,000 or more per month. If DeepSeek V4 offers comparable quality at even half the price, the annual savings would be significant enough to fund additional engineering headcount.

Data Sovereignty and Self-Hosting

Under an MIT license, V4 would be fully self-hostable. For businesses subject to strict regulatory constraints (healthcare, defense, finance), this is a major advantage: no data leaves your servers. It is also a benefit for European companies seeking GDPR compliance without relying on American providers.

A European fintech processing sensitive financial data, for instance, could deploy V4 on its own infrastructure and use it for customer communication analysis, fraud detection, or regulatory document processing, all without sending a single byte of customer data to a third-party API. For healthcare organizations subject to HIPAA in the US or equivalent regulations in Europe, this self-hosted approach removes one of the biggest barriers to AI adoption.

Chinese Market Optimization

With high scores on C-Eval benchmarks, V4 is a natural choice for businesses targeting the Chinese market. The understanding of linguistic and cultural nuances surpasses what Western models can offer. For international companies expanding into China, this means better customer support automation, more natural marketing copy in Mandarin, and more accurate analysis of Chinese-language social media sentiment and competitive intelligence.

How Much Does It Cost to Self-Host DeepSeek V4?

Self-hosting a trillion-parameter model is no small undertaking. Here are the estimates:

FP16 (full precision): approximately 2 TB of VRAM, requiring multiple A100 or H100 GPUs in a cluster.

Q4_K_M quantization: approximately 500 GB, which is still substantial.

Minimum configuration: a multi-node setup or 8-bit quantization on 4x RTX 4090 could work, but with trade-offs in inference speed.

For a business, this represents a hardware investment in the range of $50,000 to $200,000 depending on configuration, not counting electricity and maintenance costs. The most accessible alternative remains using the DeepSeek API, which has historically offered very competitive pricing.

When Is DeepSeek V4 Releasing?

This is the million-token question. The timeline of events is revealing:

January 12, 2026: Publication of the Engram memory paper, considered a foundational building block of V4.

January 2026: Code reference leaked under the name "MODEL1" on GitHub.

February 11, 2026: Silent expansion of the context window to 1 million tokens on the existing API.

February 17, 2026: Expected announcement date per community speculation. Nothing happened.

March 3, 2026: Rumored launch date, coinciding with the Two Sessions (a major political event in China). Still nothing.

March 5, 2026: OpenAI launches GPT-5.4.

March 10, 2026: Still no official release.

Several hypotheses are circulating to explain the delay. The most likely: the launch of GPT-5.4 by OpenAI on March 5 may have pushed DeepSeek to recalibrate its benchmarks to ensure V4 can be positioned as a credible competitor to OpenAI's new model. Others point to optimization difficulties related to Huawei chips, or simply an internal schedule that never matched community expectations.

Limitations and What We Don't Know Yet

It would be irresponsible to present only the positive aspects. Here is what we do not yet know, or what could prove problematic.

No independent benchmarks. All cited performance figures come from internal leaks. Until independent evaluations are conducted, these numbers should be taken with caution.

Built-in censorship. Like all Chinese models, DeepSeek is subject to Chinese government regulations. API-accessible versions may refuse to answer certain questions deemed sensitive. Self-hosting the open-source model would mitigate this issue but not eliminate it entirely, as bias can be embedded in training data.

Multimodal remains unproven. The announced image and video generation capabilities have not been publicly demonstrated. Actual quality could fall short of expectations, especially against specialized models like DALL-E 3, Midjourney, or Sora.

Inference cost at scale. Even though training costs are low, the inference cost for a trillion-parameter model, even with an efficient MoE, remains unknown. API pricing has not been announced.

Huawei chip dependency. While Huawei optimization is a strategic asset, it also presents a risk. Ascend chips do not have the same software ecosystem as Nvidia (CUDA), which could complicate deployments for businesses accustomed to the Nvidia ecosystem.

Who Should Care About DeepSeek V4?

You should pay attention if:

You are a developer or technical team looking for a high-performance, self-hostable code model.

You handle large volumes of documents and need a massive context window.

You are looking for a more affordable alternative to GPT and Claude for your API needs.

You have data sovereignty requirements and want an open-source model you can host internally.

You operate in the Chinese market and need a model that understands local cultural nuances.

You are an AI enthusiast who wants to understand the latest developments in the field.

You can wait if:

You already use GPT-5.4 or Claude and are satisfied, at least until independent benchmarks become available.

You lack the infrastructure to self-host a model of this size and prefer established SaaS solutions.

You need enterprise stability and support guarantees that only OpenAI and Anthropic offer today.

Your use cases do not require a massive context window or multimodal capabilities.

Conclusion: DeepSeek V4 Is a Model to Watch Closely

DeepSeek V4 is shaping up to be one of the most ambitious AI models of 2026. With 1 trillion parameters, a 1 million token context window, native multimodal capabilities, and an open-source license, it checks every box that matters for businesses and developers.

But it has not been released yet. And until it is, all we have are leaks, rumors, and signals. DeepSeek's track record, having consistently surprised the industry with V3 and R1, lends credibility to these promises. The geopolitical context and competition with GPT-5.4 add an extra layer of suspense.

What is certain is that when DeepSeek V4 does become available, it will be one of the most important models to evaluate for anyone using AI in a professional context. We will not hesitate to test it thoroughly as soon as it launches.

Preços claros, transparentes e sem custos ocultos.

Sem compromisso, preços para ajudá-lo a aumentar sua prospecção.

Créditos(opcional)

Você não precisa de créditos se você quiser apenas enviar e-mails ou fazer ações no LinkedIn

Podem ser usados para:

Encontrar E-mails

Ação de IA

Encontrar Números

Verificar E-mails

€19por mês

1,000

5,000

10,000

50,000

100,000

1,000 E-mails encontrados

1,000 Ações de IA

20 Números

4,000 Verificações

€19por mês

Descubra outros artigos que podem lhe interessar!

Ver todos os artigosSoftware

Publicado em 6 de jul. de 2025

Lead411 vs Lusha vs Emelia : o duelo definitivo entre as ferramentas de prospecção B2B

Mathieu Co-founder

Mathieu Co-founderLeia mais

Software

Publicado em 1 de jul. de 2025

Lusha vs Waalaxy vs Emelia: quem dominará em 2026?

Niels Co-founder

Niels Co-founderLeia mais

Software

Publicado em 25 de mai. de 2025

Os 15 melhores softwares gratuitos para substituir o Photoshop

Mathieu Co-founder

Mathieu Co-founderLeia mais

Software

Publicado em 15 de mai. de 2025

6 melhores softwares de edição de vídeo gratuitos: conteúdos profissionais gratuitos

Mathieu Co-founder

Mathieu Co-founderLeia mais

Software

Publicado em 7 de ago. de 2024

Lemlist vs Waalaxy 2026: qual ferramenta LinkedIn escolher?

Marie Head Of Sales

Marie Head Of SalesLeia mais

Software

Publicado em 2 de mai. de 2024

7 melhores alternativas ao Lemlist: o guia definitivo de 2026

Marie Head Of Sales

Marie Head Of SalesLeia mais

Links úteis

HubCold-email: Guia CompletoEntregabilidade: Guia completoAlternativa ao LemlistAPISolicitar uma demonstraçãoPrograma de afiliadosFind emailMade with ❤ for Growth Marketers by Growth Marketers

Copyright © 2026 Emelia All Rights Reserved