Back to hub

Blog

AI

Promptfoo: Test and Secure Your AI Agents (The Startup OpenAI Just Acquired)

Published on Mar 12, 2026Updated on May 27, 2026

At Emelia, artificial intelligence powers our B2B prospecting platform: automated cold email writing, data enrichment, lead scoring. At Bridgers, we design and deploy AI agents for clients, from support chatbots to complex workflow automation. In both cases, one question comes up every time before going to production: how do you make sure these AI systems won't hallucinate, leak sensitive data, or get manipulated by a malicious prompt? That's exactly the problem Promptfoo solves. And that's exactly why OpenAI just acquired it.

On March 9, 2026, OpenAI announced the acquisition of Promptfoo, the world's most widely used open-source platform for testing and red-teaming AI applications. With over 350,000 developers, 130,000 monthly active users, and adoption by more than 25% of Fortune 500 companies, Promptfoo has become the go-to tool for evaluating, testing, and securing LLM-based applications in just two years. The deal values the startup at $86 million, and its integration into OpenAI Frontier, the enterprise AI agent platform launched on February 5, 2026, is already planned.

But beyond the acquisition news, Promptfoo is first and foremost a powerful tool that every developer working with LLMs should know about. This comprehensive guide covers what Promptfoo does, how to integrate it into your workflow, and what this acquisition actually changes for technical teams.

What is Promptfoo: Testing Your Prompts and AI Agents Before Production

Promptfoo is an open-source CLI (command-line interface) and library, licensed under MIT, designed for systematic evaluation and testing of LLM-based applications. Founded in 2026 by Ian Webster (CEO) and Michael D'Angelo (CTO), the tool was born from a simple observation: developers building AI applications rely on trial and error. They tweak a prompt, test manually, and hope for the best. Promptfoo replaces this ad-hoc approach with test-driven development for LLMs.

In practice, Promptfoo lets you:

Evaluate prompts: compare side-by-side responses from different prompts and different models (GPT, Claude, Gemini, Llama, Mistral) against the same test cases

Score outputs automatically: define quality metrics (relevance, coherence, toxicity detection) and let Promptfoo grade each response

Test security via red teaming: simulate adversarial attacks to identify vulnerabilities before deployment

Compare models: benchmark GPT-4, Claude 3, Gemini, Llama 3, or any other model side-by-side through a single declarative configuration

Automate testing in CI/CD: integrate evaluations directly into GitHub Actions, GitLab CI, or Jenkins

The tool runs entirely locally. Your prompts and data never leave your machine, a major selling point for privacy-conscious organizations. Configuration is handled through a simple YAML file (promptfooconfig.yaml), and results appear in an interactive web viewer or on the command line.

Ian Webster summarizes the philosophy: "We founded Promptfoo in 2026 to make it easy for developers to systematically test their AI applications. We quickly realized that adversarial tests for security, safety, and other behavioral risks were the biggest blockers to shipping AI, especially at large enterprises."

Red Teaming, Prompt Injection, Data Leaks: What Promptfoo Actually Detects

The core of Promptfoo's value proposition is its red-teaming engine. The tool tests for over 50 vulnerability types specific to AI applications. Here's what that covers in practice.

Prompt Injection and Jailbreaking

Promptfoo automatically generates adversarial inputs that attempt to bypass your AI system's guardrails. It simulates attacks that try to override system instructions, extract the original prompt, or make the model behave in unintended ways. This includes indirect injections via context (for example, in a RAG system), SQL injections via prompt-to-SQL, and attempts to execute unauthorized shell commands.

Data Leak and PII Detection

The tool tests whether your application can be tricked into revealing personally identifiable information (PII), confidential customer data, or internal information. The pii:direct, pii:indirect, and pii:social plugins cover direct disclosures, cross-referencing deductions, and social engineering respectively.

Tool Misuse Detection

For AI agents with access to external tools (APIs, databases, payment systems), Promptfoo verifies that the model respects role-based access controls (RBAC) and doesn't access unauthorized functionality.

Compliance Monitoring

Promptfoo aligns with established frameworks: the OWASP Top 10 for LLMs and the NIST AI Risk Management Framework. Generated reports quantify risks and provide remediation recommendations.

Toxic Behavior and Bias

The harmful plugins detect problematic outputs: misinformation, hate speech, copyright violations, unauthorized medical or legal advice, and discriminatory bias.

Configuration is declarative and modular. A single YAML file is all you need to define which plugins to activate and which attack strategies to use:

```yaml redteam: plugins:

'harmful'

'pii:direct'

'pii:social'

'rbac'

'competitors'

strategies:

'prompt-injection'

'jailbreak'

```

Setting Up Promptfoo in Your CI/CD: Step-by-Step Guide with GitHub Actions

One of Promptfoo's strongest advantages is its native integration with CI/CD pipelines. You can automate both quality testing (prompt evaluations) and security scanning (red teaming) on every pull request or on a defined schedule.

Automatic Evaluation on Every PR

Here's a GitHub Actions configuration that triggers an evaluation whenever your prompts are modified:

```yaml name: LLM Eval on: pull_request: paths:

'prompts/**'

'promptfooconfig.yaml'

jobs: evaluate: runs-on: ubuntu-latest steps:

uses: actions/checkout@v4

uses: actions/setup-node@v4

with: node-version: '22'

name: Run eval

env: OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }} run: | npx promptfoo@latest eval \ -c promptfooconfig.yaml \ --share \ -o results.json

name: Check quality gate

run: | FAILURES=$(jq '.results.stats.failures' results.json) if [ "$FAILURES" -gt 0 ]; then echo "Eval failed with $FAILURES failures" exit 1 fi ```

Daily Security Scan

For continuous red teaming, you can set up an automated daily scan:

```yaml name: Security Scan on: schedule:

cron: '0 2 *'

jobs: red-team: runs-on: ubuntu-latest steps:

uses: actions/checkout@v4

name: Run red team scan

uses: promptfoo/promptfoo-action@v1 with: type: 'redteam' config: 'promptfooconfig.yaml' openai-api-key: ${{ secrets.OPENAI_API_KEY }} github-token: ${{ secrets.GITHUB_TOKEN }} ```

The official GitHub Action (promptfoo/promptfoo-action@v1) automatically posts a comment on the PR with results, including a before/after comparison when you modify a prompt. A built-in caching system reuses previous LLM call results to reduce costs.

Multi-Model Testing

Promptfoo also lets you test the same prompt across multiple models in parallel using a GitHub Actions matrix:

```yaml strategy: matrix: model: [gpt-4, claude-3-opus, gemini-pro] steps:

name: Test ${{ matrix.model }}

run: | npx promptfoo@latest eval \ --providers.0.config.model=${{ matrix.model }} \ -o results-${{ matrix.model }}.json ```

OpenAI Acquires Promptfoo for $86M: Why Now?

The March 9, 2026 announcement didn't come out of nowhere. Promptfoo had raised $5 million in seed funding (2026, led by Andreessen Horowitz), then $18.4 million in a Series A (July 2026, led by Insight Partners with participation from a16z), totaling $23 million raised with a post-money valuation of $86 million.

Integration into OpenAI Frontier

The strategic rationale is clear. OpenAI launched Frontier on February 5, 2026, an enterprise platform for building, deploying, and managing "AI coworkers," autonomous AI agents that interact with production systems, CRMs, databases, and internal applications. Early customers include Uber, State Farm, Intuit, and Thermo Fisher Scientific. Deployment partners include Accenture, Capgemini, and McKinsey.

But autonomous agents accessing payment systems, patient records, or customer data demand rigorous security guarantees. Promptfoo fills exactly that gap by integrating automated red teaming, vulnerability detection, and compliance monitoring directly into the platform.

Srinivas Narayanan, CTO of B2B Applications at OpenAI, confirms: "Promptfoo brings deep engineering expertise in evaluating, securing, and testing AI systems at enterprise scale. We're excited to bring these capabilities directly into Frontier."

The Financial Context

Enterprise security spending is projected to reach $244 billion in 2026. The specific AI infrastructure security segment is growing at 18.8% annually, from $12 billion in 2026 to $14.3 billion in 2026. By integrating Promptfoo into Frontier as a premium feature, OpenAI transforms a one-time evaluation cost into recurring revenue tied to enterprise subscriptions.

The Team

Promptfoo had 23 people (some sources cite 11 engineers) across engineering, go-to-market, and operations. The full team joins OpenAI to continue development, and OpenAI has committed to maintaining the open-source project.

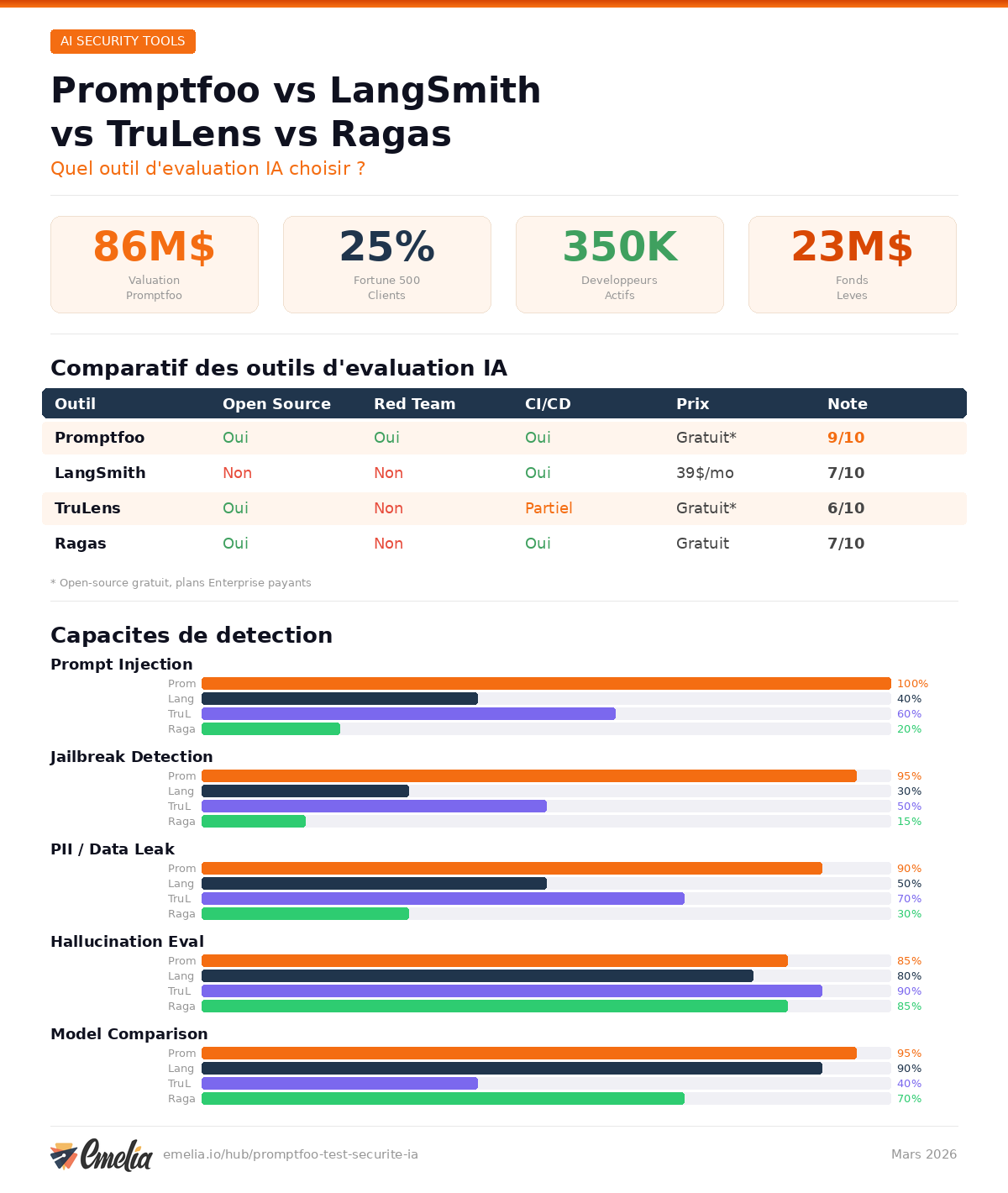

Promptfoo vs LangSmith vs TruLens vs Ragas: Which AI Evaluation Tool Should You Choose?

The market for LLM application evaluation and testing is booming. Here's how Promptfoo stacks up against its main competitors.

Criteria | Promptfoo | LangSmith | TruLens | Ragas |

|---|---|---|---|---|

Primary focus | Red teaming + security evaluation | Tracing + evaluation (LangChain ecosystem) | Feedback-driven eval (RAG focus) | RAG evaluation (academic metrics) |

Open source | Yes (MIT) | Partially (tracing open, platform closed) | Yes (MIT) | Yes (Apache 2.0) |

Built-in red teaming | Yes, 50+ vulnerability plugins | No | No | No |

CI/CD integration | Native (GitHub Actions, GitLab, Jenkins) | Limited | Limited | No |

Supported models | All (OpenAI, Anthropic, Google, Llama, Mistral, custom) | Primarily LangChain/LangGraph | All via LLM-as-judge | All via LangChain |

Pricing | Free (10k probes/mo), Enterprise on request | Free (5k traces), Plus $39/user/mo, Enterprise on request | Free (open source), cloud paid | Free (open source) |

Local execution | Yes, 100% local | Cloud (self-host in Enterprise) | Self-host possible | Local |

RAG specialty | Dedicated plugins | Yes, native | RAG Triad (industry benchmark) | Industry-standard metrics |

Best for | Security, compliance, red teaming | LangChain teams, debugging | RAG quality evaluation | Research, RAG metrics |

The verdict: if your priority is securing and ensuring compliance of your AI applications, Promptfoo has no direct equivalent. LangSmith excels at debugging and tracing within the LangChain ecosystem. TruLens and Ragas are the benchmarks for RAG quality evaluation but don't cover red teaming. In practice, many teams combine Promptfoo (security) with LangSmith or TruLens (observability and quality).

Can You Still Trust Promptfoo Now That OpenAI Owns It?

This is the question lighting up Reddit and the developer community since the announcement. The debate boils down to one sentence: can you trust an AI model evaluation tool when it belongs to one of the AI model providers?

Arguments for Reliability

OpenAI has publicly committed to keeping Promptfoo open source under its current MIT license. The code remains inspectable, modifiable, and redistributable. The tool will continue to support all providers (Anthropic, Google, Meta, Mistral, open-source models). Ian Webster and Michael D'Angelo remain at the helm of the project.

From a technical standpoint, Promptfoo evaluations run locally on your machine. Results are not sent to OpenAI (unless you explicitly opt into the sharing feature). The transparency of open-source code allows anyone to verify the absence of bias in scoring mechanisms.

Arguments for Concern

On Reddit, skepticism runs deep. The main argument: even if the code stays open, the roadmap is now driven by OpenAI. Development priorities, optimizations, new plugins, all of this will naturally be oriented toward the OpenAI ecosystem. A developer testing a Claude or Gemini model with an OpenAI-owned tool has a legitimate question about objectivity.

Others point out that tech industry history tends to validate the skeptics: open-source projects acquired by tech giants often see their community version stagnate while the enterprise version gets all the attention.

The Pragmatic Position

Today, Promptfoo remains the best open-source red-teaming tool for AI applications. As long as the code is open and auditable, the community can verify its integrity. The day biases appear in evaluation mechanisms, the community will spot them in the code. In the meantime, it's wise to track the project's evolution on GitHub and keep an eye on alternatives like DeepEval, PyRIT (Microsoft), or custom in-house testing tools.

Concrete Use Cases: Who Should Use Promptfoo (and Who Can Skip It)

Startup Deploying a Customer Chatbot

You're launching a support chatbot for your SaaS. Before going live, you need to verify it won't leak information about your infrastructure, won't generate toxic responses, and can resist manipulation attempts. Promptfoo lets you run a full red-teaming scan in a single command, identify vulnerabilities, and fix your prompts before going to production. The Community plan (free, 10,000 probes per month) is more than enough for this use case.

Agency Building AI Solutions for Clients

At Bridgers, we deliver AI agents to clients across various industries (finance, healthcare, retail). Each client has different compliance requirements. Promptfoo lets you create custom scan profiles per client and per industry, integrate testing into the CI/CD pipeline, and deliver documented security reports. The Enterprise plan becomes relevant when you're managing multiple clients with collaboration, SSO, and centralized dashboard needs.

Enterprise Securing LLM Pipelines

For a Fortune 500 company deploying dozens of AI agents accessing critical systems, Promptfoo provides continuous monitoring, centralized compliance dashboards, remediation tracking, and alignment with OWASP and NIST. The upcoming integration into OpenAI Frontier promises to further streamline the workflow for teams already using the OpenAI platform.

Who Can Skip It?

If you're using an LLM exclusively for internal tasks like marketing copy generation or document summarization, with no access to sensitive data and no exposure to external users, red teaming isn't your priority. A quality evaluation framework like Ragas or TruLens will be more relevant than Promptfoo. Similarly, if you're entirely within the LangChain ecosystem, LangSmith will offer a more natural integration for debugging and tracing.

Promptfoo's Limitations: What It Doesn't Do

Promptfoo is not a silver bullet. Here's what you need to know before adopting it.

Learning curve: the YAML configuration and plugin logic require an initial investment. The tool is designed for developers comfortable with the command line. Non-technical teams should plan for training time.

No production monitoring: Promptfoo excels at pre-deployment testing and periodic scanning, but it's not a real-time observability tool. For production monitoring, you'll need a complementary tool (LangSmith, Langfuse, Arize Phoenix).

Not a substitute for code testing: as several developers point out on Reddit, Promptfoo tests model outputs, not the quality of your agent's code. Infinite loops, missing exit conditions, orchestration logic errors, those fall under traditional tooling (linters, SAST, SonarQube).

Opaque Enterprise pricing: the Community plan is generous (free, 10k probes/month), but upgrading to Enterprise requires a custom quote with no published pricing tiers.

What the Acquisition Means for Companies Using AI

OpenAI's acquisition of Promptfoo sends a clear signal to the market: AI application security is no longer a nice-to-have, it's foundational infrastructure. When the market leader in LLMs invests $86 million to embed red teaming into its agent platform, it means companies deploying AI agents without testing them are taking on increasing risk.

For teams building with AI, as we do at Emelia for prospecting and at Bridgers for client solutions, the takeaway is clear: integrating security testing into the development workflow is no longer optional. Promptfoo, whether it belongs to OpenAI or not, remains the best starting point to get there today.

The open-source project is available on GitHub. Setup takes one line: npx promptfoo@latest init. The rest is configuration and discipline.

Clear, transparent prices without hidden fees

No commitment, prices to help you increase your prospecting.

Credits(optional)

You don't need credits if you just want to send emails or do actions on LinkedIn

May use it for :

Find Emails

AI Action

Phone Finder

Verify Emails

€19per month

1,000

5,000

10,000

50,000

100,000

1,000 Emails found

1,000 AI Actions

20 Number

4,000 Verify

€19per month

Discover other articles that might interest you !

See all articlesSoftware

Published on Jun 24, 2025

Expandi vs Waalaxy: Find out Which one to Choose

Niels Co-founder

Niels Co-founderRead more

B2B Prospecting

Published on Apr 1, 2025

6 Awesome B2B Data Providers: Your Guide to Fresh, Actionable Data in 2026

Niels Co-founder

Niels Co-founderRead more

B2B Prospecting

Published on May 19, 2025

BCC Email: Best Practices and B2B Prospecting Alternatives

Mathieu Co-founder

Mathieu Co-founderRead more

Software

Published on Jun 24, 2025

LeadFuze vs Waalaxy: Comprehensive Analysis to Help you Make the Best Choice

Niels Co-founder

Niels Co-founderRead more

Tips and training

Published on May 19, 2025

Master CFBR LinkedIn: Enhance Your Engagement Strategy

Niels Co-founder

Niels Co-founderRead more

AI

Published on Jun 18, 2025

5 Best AI Content Generators 2026 (Marketing + Cold Email)

Niels Co-founder

Niels Co-founderRead more

Made with ❤ for Growth Marketers by Growth Marketers

Copyright © 2026 Emelia All Rights Reserved